The craft of knowledge-work, the role of theory and the challenge of scale

If enterprise-architecture is a kind of craft – part art, part science – then how do we get it to scale? Is it even possible to get it to scale? – because if not, the whole enterprise of enterprise-architecture itself is doomed to failure…

What triggered this one was a Tweet yesterday by Catherine Walker:

- transageo: Crafts don’t need theory (@danieldennett). Scaling needs theory (@snowded). Scaling moves away from craftsmanship, towards standardisation.

If we compare this to what’s happened over the years in the disciplines of enterprise-architecture, there’s been an increasing awareness that whilst we definitely do need standardisation, it can also cause as many problems as it solves. As I understand it, the challenge for enterprise-architecture is not just about creating ‘sameness’ across a large scale, but about an appropriate balance between ‘same and different’ – the right balance between control, complexity and ‘the chaotic’ – across the respective scope and scale.

But if the logic of Walker’s tweet is correct, then the only thing that we will be able to do at scale is standardisation – the kind of ‘complexity-reduction’ promoted by Roger Sessions, for example. ‘Sameness’ scales, ‘difference’ doesn’t – that’s what this seems to say. And that’s pretty much an existential-challenge for the validity of enterprise-architecture: it suggests that EA might well work at small scale, but as we expand the scope and scale, the art of enterprise-architecture – and perhaps even the science too – would necessarily fade into nothingness, replaced solely by standardisation.

Which for me is a worry, because to me the art – the craft – of EA is what makes it all worthwhile.

So yeah, I needed to think a bit about this one…

And if we do think about it, it’s clear that that tweet is framed as a formal-logic syllogism:

- Premise 1: Crafts don’t need theory.

- Premise 2: Scaling needs theory.

- Inference: Scaling moves away from craftsmanship, towards standardisation.

Given that, if there are any questions about the validity of either premise, or of the derivation of the inference, then other interpretations are possible – and if so, those other interpretations might still leave space for the ‘craft’-aspects of enterprise-architecture at scale.

[An aside: for this purpose, I’ll assess those premise- and inference-statements in their literal form above; but to be fair to Catherine, it’s really important to acknowledge that she will almost certainly have had to over-compress the intended idea to squeeze it into the 140-character limit. What she’s shown up in that syllogism is an important question in its own right, but I’m not going to say “Catherine is wrong!”, because she probably isn’t. 🙂 ]

So let’s do this step by step:

— “Crafts don’t need theory”

I don’t know the context of the Daniel Dennett source that Catherine Walker cites for this, but to me there are two clear avenues here that we could explore:

- “crafts don’t need theory” is not the same as “crafts don’t have theory”

- a lot depends on what’s meant by ‘theory’…

The first of those two points has obvious implications for the implied assertion that craft can’t be scaled. If Premise 2 is correct – “scaling needs theory” – then crafts that don’t have theory can’t be scaled; but crafts that do have theory probably can be scaled.
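To make the ‘need’ versus ‘have’ distinction concrete, here is a small brute-force check of the syllogism. It uses one possible formalisation, which is my own assumption and not the only reading: each craft is a pair `(has_theory, scales)`. Premise 2 is read as "scaling requires having theory". Premise 1 is read weakly as "at least one craft manages without theory". The inference is read as "no craft scales". The function names are hypothetical. The check simply enumerates small worlds and looks for countermodels, meaning worlds where both premises hold but the conclusion fails.

```python
from itertools import product

# Assumed formalisation: each craft is a pair (has_theory, scales).


def premises_hold(crafts):
    # Premise 2, read as "scaling requires having theory": scales -> has_theory.
    p2 = all(has_theory or not scales for has_theory, scales in crafts)
    # Premise 1, read weakly as "crafts don't need theory":
    # at least one craft in this world manages without it.
    p1 = any(not has_theory for has_theory, _ in crafts)
    return p1 and p2


def conclusion_holds(crafts):
    # The inference under test, read strongly: no craft scales.
    return not any(scales for _, scales in crafts)


def countermodels(n_crafts):
    """All worlds of n_crafts crafts where both premises hold but the conclusion fails."""
    options = list(product([False, True], repeat=2))
    return [crafts for crafts in product(options, repeat=n_crafts)
            if premises_hold(crafts) and not conclusion_holds(crafts)]


print(countermodels(1))  # []
print(countermodels(2))
# [((False, False), (True, True)), ((True, True), (False, False))]
```

With a single theory-less craft there is no countermodel, which matches the first half of the argument: a craft without theory can't scale. With two crafts, a craft that *has* theory can scale without violating either premise. So under this reading the inference does not follow from the premises alone.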

Which brings us to the next point: if craft can have theory, what does that look like? Would it always take the form of standardisation? And what is ‘theory’, anyway?

So let’s take those questions in reverse-order.

Theory is… well, it’s theory, innit? In other words, yep, it’s another of these blurry words that we keep tripping over in enterprise-architecture. There are lots and lots of different definitions, but the one I’ll pick here is this: theory is a means to guide how we make sense of something, and then decide what to do. We can have all manner of theory: sometimes the theory is good, and sometimes it might not be very good at all, in the sense of lining-up with what actually happens in the real-world – the quality of theory, or appropriateness of theory, often depends as much on the context it’s applied to as on the structure of the theory itself.

And if we take a sensemaking-framework such as Cynefin or SCAN, then it should be clear that ‘theory’ doesn’t just belong in one place, one domain: it’s everywhere. The nature of theory, and the appearance of theory, is different in each of the domains in those sensemaking-frameworks: for example, Cynefin draws very important and explicit distinctions between the theory of ‘control’ versus the theory of complexity – yet in both of those domains it’s still theory, in the sense of ‘a means to guide sensemaking and decision-making’.

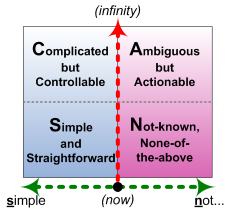

So let’s interpret this in terms of the SCAN frame:

As I’ve said elsewhere, the left-side of the frame is about certainty and repeatability, whilst over on the right is variance and uniqueness; the vertical axis is about how the underlying mechanisms for decision-making will (and, usually, must) change as we move downward towards real-time. If we map the various forms of ‘means to guide sensemaking and decision-making’ onto this frame, we can summarise the result roughly as follows:

- Simple (real-time, predictable): rules, supported by definitions

- Complicated (‘considered’, predictable): algorithms, supported by formulae and standards

- Ambiguous (‘considered’, uncertain): patterns or guidelines, supported by abstract meta-theory to contextualise those descriptive patterns

- Not-known (real-time, uncertain): principles, supported by embodied meta-theory to contextualise those normative principles
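As a rough illustration, the summary above can be written as a lookup keyed on the two SCAN axes. This encoding is hypothetical and is not part of SCAN itself. The names `Certainty`, `Timescale`, `SCAN_GUIDANCE` and `guidance_for` are assumptions made for this sketch.

```python
from enum import Enum


class Certainty(Enum):
    PREDICTABLE = "predictable"  # left side of the frame: certainty, repeatability
    UNCERTAIN = "uncertain"      # right side: variance, uniqueness


class Timescale(Enum):
    CONSIDERED = "considered"    # further from the point of action
    REAL_TIME = "real-time"      # at the point of action


# (certainty, timescale) -> (domain name, primary guidance, supporting theory)
SCAN_GUIDANCE = {
    (Certainty.PREDICTABLE, Timescale.REAL_TIME): ("Simple", "rules", "definitions"),
    (Certainty.PREDICTABLE, Timescale.CONSIDERED): ("Complicated", "algorithms", "formulae and standards"),
    (Certainty.UNCERTAIN, Timescale.CONSIDERED): ("Ambiguous", "patterns or guidelines", "abstract meta-theory"),
    (Certainty.UNCERTAIN, Timescale.REAL_TIME): ("Not-known", "principles", "embodied meta-theory"),
}


def guidance_for(certainty: Certainty, timescale: Timescale) -> str:
    """Summarise the typical form of 'theory' for one region of the frame."""
    domain, guide, support = SCAN_GUIDANCE[(certainty, timescale)]
    return f"{domain}: {guide}, supported by {support}"


print(guidance_for(Certainty.UNCERTAIN, Timescale.REAL_TIME))
# Not-known: principles, supported by embodied meta-theory
```

Note that standards appear only on the ‘predictable’ side of the table. A real context will usually have elements in every region of the frame, so a single lookup like this is only a starting point for sensemaking, not a verdict.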

One useful SCAN cross-map is in terms of skill-levels:

The type of theory that a trainee would rely on is likely to be very different from the theory that a master would use: yet in both cases it’s still in the same overall domain or continuum of theory – and still theory, too.

Likewise if we do a cross-map to CLA, or Causal Layered Analysis:

The nature of the story will change within each layer of the analysis, but again it’s still all in the same domain-continuum, and all of it is ‘theory’ in the sense of ‘guide to sensemaking and decision-making’.

So to come back to the original syllogism, what this shows us is that standards and suchlike are just one particular class of theory, that would mainly apply in one particular aspect of the overall sensemaking / decision-making space – over towards the ‘control’ side or left-side of the frame, if we’re using SCAN as the descriptor here. Hence to describe standards as ‘theory’ – or, worse, as the only valid form of ‘theory’ – could be more than a bit misleading, especially if the context is more ‘in’ a different sensemaking-domain.

To give a practical example, consider knitting. Yes, it’s a craft – yet it does still have distinct theory, with its own distinct mix of rules (‘knit one, purl one’), algorithms (which, confusingly, are usually called ‘patterns’), guidelines (how to adapt a pattern for different body-shapes and sizes) and principles (how to turn a ‘mistake’ into a joyous feature of the garment). There are standards – needle-size, thread-size, body-size, and much more – that help in decision-making in the craft, but they don’t determine the craft: that distinction is probably quite important.

We’ll come back to that later, anyway; for now, let’s move on to the second premise.

— “Scaling needs theory”

For once, I’m going to agree strongly with Dave Snowden here: I can’t see how it’s possible to scale without theory.

Whether we’re dealing with control, complexity or ‘chaos’ – uniqueness – we’ll need appropriate theory to match. And whether we’re going to embed the scaling into machines or IT-systems or the like, or engage large numbers of people in an idea or an intent, whatever we do is going to involve and rely on the scaling that’s made available by theory. We need theory in order to be able to scale: the only question is which type of theory applies in each case.

And the answer to that question is much as summarised above: the theory we’d use, and type of theory we’d use, depends on what we’re trying to do. To use Cynefin terms, theory for the Complicated domain – standards and suchlike – is often significantly different from the complexity-theory we’d use in Complex contexts. For example, ‘truth’ is definable in the former, but more a matter of probabilities in the latter; standards and certainties and ‘provable’ linear-algorithms aren’t much help in complex wicked-problems where the context can change just by the very act of looking at it.

Just as the theory is different in each case, so too is the nature and means of scaling. For the Complicated domain, standardisation is one of the means available to us for scaling: for example, in enterprise-architecture we’d often try to standardise some aspects of language or terminology, or specify particular protocols for use in specific circumstances. Yet as we move more towards uniqueness and uncertainty – the Complex domain in Cynefin, the Ambiguous or Not-known domains in SCAN – then standardisation will often actually prevent us from scaling, because it can’t cope with the types of difference that always occur across larger scales, especially in any human-oriented context. In those kinds of domains, we need meta-theory, meta-frameworks, what we could almost call meta-standardisation – the sort-of-standards for thesauri and suchlike tactics for how to work where standards alone don’t make sense.

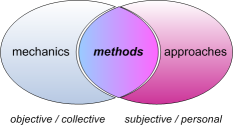

To use another SCAN cross-map, this time in terms of skills:

Standardisation largely assumes that methods are derived only from the mechanics of the context – the ‘objective / collective’ elements of the action. But once we acknowledge the contextual and ‘subjective / personal’ nature of skill, and its ability to adapt to difference in order to achieve the desired result, then we also need to acknowledge a contextual balance between methods, mechanics and approaches – that the appropriate methods for each context depend on an appropriate mix of mechanics (‘objective’) and approaches (‘subjective’). Which means that we need appropriate theory both for the mechanics and for the approaches that underpin the respective skill.

To go back to that knitting example, we’d often use standards when working on our own, but also our own judgement as well – which is what makes it a craft. But if we want to scale it up – for a knitting-bee, perhaps, or even for some broad collective such as Teddies For Tragedies – then we’d use a mix of predefined standards (‘objective’) and patterns and variance for individual skill (‘subjective’). In that type of context, the theory includes variance: as a sort-of-standard, it includes both standards and the bounded absence of standards. And it wouldn’t work – wouldn’t scale – unless it did support that variance.
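One way to picture a ‘sort-of-standard’ that includes both standards and the bounded absence of standards is the following minimal sketch. Its assumptions: a shared pattern is modelled as some attributes that must match exactly, some that may vary within limits, and everything else left to the maker. The class name `SortOfStandard` and all the example values are hypothetical and purely illustrative. They are not the requirements of any real knitting project.

```python
from dataclasses import dataclass


@dataclass
class SortOfStandard:
    """A hypothetical shared 'pattern' for a group knitting project.

    fixed:   attributes every contribution must match exactly ('objective' standards)
    bounded: attributes that may vary, but only within (low, high) limits
    Anything named in neither is left to the individual maker's judgement.
    """
    fixed: dict
    bounded: dict

    def check(self, item: dict) -> list:
        """Return a list of problems; an empty list means the item fits."""
        problems = []
        for key, required in self.fixed.items():
            if item.get(key) != required:
                problems.append(f"{key}: expected {required!r}, got {item.get(key)!r}")
        for key, (low, high) in self.bounded.items():
            value = item.get(key)
            if value is None or not low <= value <= high:
                problems.append(f"{key}: {value!r} outside {low}-{high}")
        return problems


# Illustrative values only, not any real project's requirements.
spec = SortOfStandard(fixed={"yarn": "wool"}, bounded={"height_cm": (20, 30)})
print(spec.check({"yarn": "wool", "height_cm": 24, "colour": "purple"}))  # []
print(spec.check({"yarn": "wool", "height_cm": 35}))  # ['height_cm: 35 outside 20-30']
```

The point of the design is that `colour`, or any other unnamed attribute, is never checked at all. That unchecked space is where individual skill and judgement operate, and it is what allows the collective effort to scale.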

Scaling needs theory: but a lot depends on what we mean by ‘theory’.

Which brings us to that initial inference from the syllogism:

— “Scaling moves away from craftsmanship, towards standardisation”

The simplest way to put this is that there’s a vast amount of ‘It depends…’ here, not least on:

- what we mean by ‘scaling’

- what we mean by ‘craft’ or ‘craftsmanship’

- what we mean by ‘standardisation’

- what we mean by ‘away from’

- what we mean by ‘towards’

If our aim is to create an industrial product, and we need identicality in that product, then yes, we’ll need standards and standardisation. To take a military example, it was almost impossible to scale the supply of armaments when every cannon had a different bore, and rifles were so thoroughly hand-made that there was almost no interchangeability or substitutability for spare-parts: standardisation was the crucial manufacturing-revolution that gave the Union side the upper-hand in the US Civil War, and, later, the Allies in the Second World War.

Yet that use of standardisation only applies for scaling of things that need to be identical – or can be identical. When some things are inherently different – as they are in healthcare, for example, or even in knitting – then misuse or misapplication of standardisation will prevent scaling, because it tries to force things to fit assumptions that don’t actually apply.

I gather that, in talking about ‘Scaling needs theory’, Snowden referred to craft and standardisation in the context of the old Guild structures, and the way that those guilds tended to rely more on tradition and ‘received knowledge’ rather than systematic theory – and hence, yes, tended to block scaling, both for that reason, and also as a means to protect their own power and prestige in the respective industrial context. Those are important considerations, yes, but I tend to view those concerns more in terms of power-problems or related-themes such as purported ‘rights’ in a possession-economy – in other words, more about the nature of politics than the nature of theory. From a theory-perspective, what I would look at more is how a ‘guild’-type culture tends to develop naturally whenever there’s a high degree of inherent-variance or ‘unorder’ in the context – because the ‘guild’ is a key social means via which theory can be shared, and hence scaling can be enabled, wherever there is variance of skill, competence or context.

In practice, we see a ‘guild’-type structure develop in the business-context wherever there’s a need for knowledge-sharing and skills-sharing in order to support scaling of some kind of ‘craft’ – or, in more business-oriented terminology, a ‘profession’. (In fact it’s the lack of possibility for overall standardisation that distinguishes a ‘profession’ from a Taylorist-style predefined ‘job’.) We’ll see each profession develop its own ‘body of knowledge’ (BABOK, PMBOK, EABOK and all those other ‘..BOK’ frameworks we’ll see around the business-domains), its own communities-of-interest and communities-of-practice – which represent the key means by which that knowledge and skillset can scale.

Yes, to echo Snowden’s concerns above, we’ll also see the downside of ‘guild’-type structures in some of those cases: blatant power-games and exclusion-tactics, for example, attempts at legally-enforced monopolies, or certification-schemes that might be better-described as certification-scams… Yet beyond those problems, then yes, standardisation is valid for the ‘certainty’-side of the context – shared core-terminology, for example – but is not valid for the ‘uncertainty’ side: such as the absurdity of using a predefined multiple-choice exam as a means to test for competence at adapting a framework to contextually-dependent needs. In many cases there’s even a real danger of a near-inversion of that initial inference: standardisation moves away from scaling of craftsmanship.

To bring this back to enterprise-architecture, and the need to scale enterprise-architecture such that it can and does become the responsibility of everyone in the enterprise, we need to be very clear about applying the appropriate type of theory for each context. Sometimes we need standardisation; sometimes we do need rules, predefined algorithms, predefined formulae. Elsewhere, though, we’ll need theory in the form of patterns, guidelines, principles – and the meta-theory of how to apply those in different ways as the context itself undergoes change.

Enterprise-architecture is a kind of craft, a dynamic blend of art and science – and needs the right kinds of theory from each of them, in the right mix, in order to scale and to work well. But which theory, you might ask? – which is ‘the right theory’, for each circumstance? Well, that’s where we come back to that much-dreaded phrase ‘It depends…’; and, in turn, why enterprise-architecture is necessarily a ‘craft’ – a ‘craft’ that can indeed expand to any needed scale.

Hi Tom,

Great post. Applaud the direction.

This is an area long debated in Computer Science and in particular Artificial Intelligence.

Scalability (efficient repeatability or automation) is based on theory (models of an activity). Models provide a definition that can be used repeatedly (data model, flowchart, service interface, etc.) – this is the heart of Scientific Management, everything can be reduced and optimized (if only we could ever get the models right…).

When models are conflated with implementation or ‘use’, we ‘bake-in’ the context of the model designer and preclude context of the model user. This promotes standardization/consistency/efficiency (Taylorism) over contextualization/variance/effectiveness (Drucker).

The problem is compounded in the modern information system, where complex applications depend on the interaction between models. If models are all static, changing one model breaks dependencies with other models. The more complex the applications the more brittle they become – and here we are.

It doesn’t have to be this way: if we separate models from their implementation we get ‘plans’. The separation allows the model to be utilized to the extent useful, but supports variance (user-driven modification, or system-driven personalization). This can be extended to modification of the models themselves and adaptation of the architecture (user-driven modification, or system-driven machine-learning).
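A minimal sketch of the ‘model as plan’ idea, under stated assumptions. The model is held as plain data: a step list for a hypothetical order process. It is interpreted at the point of use, so each use can apply its own modifications without changing the shared model. The step names and `run_plan` are invented for illustration. This is not the architecture described in the linked papers, nor any specific product.

```python
# Hypothetical 'model' held as data: the designer's default plan for a process.
# A tuple, so no single use can change the shared model in place.
DEFAULT_PLAN = ("receive_order", "check_credit", "ship", "invoice")


def run_plan(plan, overrides=None):
    """Interpret a plan for one use, applying user-driven modifications.

    The plan itself is never changed: each use gets its own adapted copy,
    so variance in one context does not break other users of the same model.
    overrides may contain:
      "skip":         steps to leave out in this context
      "insert_after": (existing_step, new_step) pairs to add in this context
    """
    overrides = overrides or {}
    skip = set(overrides.get("skip", ()))
    steps = [step for step in plan if step not in skip]
    for anchor, new_step in overrides.get("insert_after", ()):
        steps.insert(steps.index(anchor) + 1, new_step)
    return steps


print(run_plan(DEFAULT_PLAN))
# ['receive_order', 'check_credit', 'ship', 'invoice']
print(run_plan(DEFAULT_PLAN, {"skip": ["check_credit"],
                              "insert_after": [("ship", "notify_customer")]}))
# ['receive_order', 'ship', 'notify_customer', 'invoice']
```

In the ‘baked-in’ alternative, the step sequence would be hard-coded in the implementation. Any local variance would then mean changing the shared code, which is exactly the brittleness described above.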

So we don’t have to look at the issue of craft vs theory-driven scalability. To address challenges of Enterprise Architecture we need to separate models from implementation, removing bias and control of central designers as a fixed concern and providing the latent potential for continuous improvement / adaptation (even if logically constrained by rules, but not structurally constrained by accidental architecture).

http://www.scribd.com/doc/48094484/Putting-Work-in-Context-Duggal-Malyk-COCOA2010-120610b

http://www.infoq.com/articles/social-lean-agile

Best,

Dave

Thanks, Dave – much appreciated. 🙂

@Dave: “Scalability (efficient repeatability or automation) is based on theory (models of an activity).”

I think I’ll have to pull that statement out as a core for another post, because it triggers a quite different thread here: I think we need to expand the meaning of ‘scalability’ here. (It doesn’t detract at all from what you’ve said following that statement, with which I can only concur.)

@Dave: “To address challenges of Enterprise Architecture…” etc

My quick response is that at present I’d say I’m certain you’re right. The catch is that I haven’t gone into that as much as you have, and I need to do so before I can give much more than that rather glib answer. Give me a bit of time on this, if you would? – thanks again, anyway.

Sounds good Tom. I believe we’ve been exploring the same issues, but from different perspectives (EA/EITA).

At the risk of demeaning an excellent article and commentary on a very real problem I wonder if we are not over-complicating what is potentially a simple issue?

EA has issues on several fronts and the notion of craft vs. science, cottage industry vs. industrialisation has been a vexing one for some time and tends to confine itself to single issues – e.g. weaving and cloth-making; pottery; art; etc. Noting that EA is multi-disciplinary (and I am not suggesting that the examples I gave are not but rather they tend to be within a single domain of expertise) I think we are dealing with multiple “crafts” that, when taken together, form a larger whole and require the ability to scale multiple domains of expertise.

Some of the domains within EA – e.g. process, information and technology – lend themselves more readily to industrialisation and scalability than, say, the softer domains such as organisational design, and roles and skills definition and delivery, which are less capable of such industrialisation. Again, I am not saying we have successfully industrialised or scaled the “harder” elements of EA but rather suggest that they are the ones more likely to take on such properties.

So, are we not caught between a rock – the “harder” EA disciplines that are more likely to scale – and a hard place – the “softer” EA disciplines where scale and industrialisation are more unlikely? If this is so and we accept the multi-disciplinary nature of EA (which you may not) does this not suggest that EA, when seen as a whole, is not capable of scalability or moving from the craft arena?

With my simple view of the five strands of EA being People, Organisation (Communities), Process (Services in more modern language), Information (including Knowledge and Data) and Technology – POPIT – I know where I can apply the kind of rigour and control needed to undertake industrial-like change and control – (P)IT. However, I am also fully aware that such tactics cannot be applied to the softer elements – PO(P) – and therefore need to employ other, more adaptive techniques.

But, then again, this may be too simplistic a view as I “get” where you and Dave are coming from. And you have probably expressed this better than me 🙂

Carl

Carl – thanks for this. (And no, I don’t think you’re being ‘too simplistic’ at all. 🙂 )

The point I’m really on about here is that whilst scalability of repeatable-things is (relatively) easy, we’re perhaps a bit too quick in parking scalability of non-repeatable things into the ‘too-hard basket’ – I really do believe we could do better with a bit more thought about the nature of ‘non-repeatable things’.

More on that in the next post, anyway.

Here are some thoughts that continually rolled through my mind as I read this blog. I had to concentrate to finish the blog because my mind was wanting to explore competing thoughts and take things in a different direction!!

I wanted to think about a craftsperson and a craftsgroup. Craft is largely applied by individuals, working solely on a single product. When I think of craft – I think of knitting or sculpting or … Largely, I think of individuals applying their knowledge, skill and abilities to an undertaking. It might take years, like the Sistine Chapel, or it might take hours, but it is most often done by a single individual.

Groups are most often gatherings of people working on individual, unrelated products. Yes, there is a common technique which is applied, but it is applied by each individual to their particular circumstance and undertaking.

Yes, there can be mutual undertakings when a group creates a shared product – a quilt – but then there is a design, a pattern for integration of the individual products into the whole.

So, there is knowledge (theory) that is shared to enable individuals to share how they create independent products, and then there is knowledge shared to create an integrated product.

We don’t speak of the art of car manufacture, do we? The car is an example of a large scale product and undertaking, which is entirely different from the large scale undertaking of the Sistine Chapel.

So, I think the key to this is about the need for integration in one dimension and the value of shared knowledge in another dimension.

Art is about shared tacit knowledge – that which cannot be made explicit and will require the act of a person to create the product. Art reflects the capacity of the person to produce an item beyond the underpinning rules, standards, etc. Art requires observation – it is the apprenticeship part beyond the theory.

Perhaps this is where things like understanding, judgement and wisdom come into play?

Hmm… I do take your point(s), yet not quite sure about this…

An awful lot of this depends on one’s chosen interpretation / definition of ‘craft’. There’s a very real risk of circular-definitions here – such as ‘craft-work is craft because it’s done by individuals; craft is only done by individuals, not groups’, and so on.

@Peter: “Groups are most often gatherings of people working on individual, unrelated products.”

If we go back to Snowden’s points above – and again, I do agree with him on this – then we can understand mediaeval guilds as a mechanism both via which the knowledge (‘theory’) of a craft is passed on (and also controlled/withheld), and also via which large-scale work of that type can be coordinated. The creation of a cathedral such as Chartres didn’t ‘just happen’: it took a vast amount of coordination, skills-management and skills-transfer, often involving tens, hundreds, even thousands of highly-skilled craftspeople, stretching over decades and into centuries.

It’s still production at scale – sometimes very large scale, such as in the ropewalks that manufactured almost all naval-rigging up until the early 19th-century. And yet it’s not the same as the mass-mechanisation that came in during the 17th and, even more, the 18th-centuries, culminating in the vast ‘manufactories’ of the 19th and 20th centuries: mass-mechanisation is ‘sameness’ at scale, whereas craftwork and guildwork managed ‘difference’ at scale.

It was only in the later part of the 20th-century that we started getting successful methods that combined both ‘sameness-at-scale’ (mass-production) and ‘difference-at-scale’ (craft-production), into techniques such as mass-customisation (more at the ‘sameness’ end) and massive scientific-research projects (more at the ‘difference’ end).

Perhaps the simplest way to put it is that in the business-context much of what would previously have been called ‘craft’ has morphed into what we would now call ‘knowledge-work’, or the work of a ‘profession’. In effect, although these days they’d put a bit of a ‘science’ spin on it, the professions have taken on much of the old mantle of the guilds – including the same kind of ethically-dubious protectionist attitudes that Snowden warned about…

If we look around in the present day, we’ll see a lot of ‘craft’-type work applied at large scale. Computer-programming is one of the more obvious examples – think of the tag-line of the WordPress community, that “code is poetry”. Our own work in EA has a much stronger linkage to the craft tradition (working with difference) than it does with the manufacturing tradition (working with sameness): the latter is more the focus of disciplines such as COBIT or Six Sigma.

@Peter: “We don’t speak of the art of car manufacture, do we?”

Maybe no, maybe yes. If you talk with the people who design manufacturing-lines, or even with the people who operate them and keep them running on the shop-floor, you’d probably get a rather different answer than from those managers and others who think they ‘control’ the overall process…

@Peter: “Art requires observation – it is the apprenticeship part beyond the theory.”

Same applies to engineering, too. (And science, for that matter: which is where I throw in yet another plug for one of my favourite books, Beveridge’s The Art of Scientific Investigation 🙂 ).

@Peter: “Perhaps this is where things like understanding, judgement and wisdom come into play?”

Yep. And almost by definition, they don’t (and shouldn’t) come into play in science when presented as ‘truth’: yet they do very definitely come into play in the practice of science. (See Beveridge again for more on that.)

Peter’s comments remind me that I have been trying to understand the relationship between art, craft, and science for a while now, though not with much success.

I have, however, homed in on a simple two by two model that does, at least for me, make some useful distinctions. The two dimensions of this model are (sort of) whether the idea of utility is important (i.e., is it “practical” or not), and whether the experience is subjective or objective. The four combinations work out thus:

not practical/subjective – art

not practical/objective – science

practical/subjective – craft

practical/objective – engineering
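Len’s two-by-two can be written as a lookup over two boolean flags. Writing it out this way makes the ‘fourth slot’ visible, because the table is incomplete until every combination has a name. The boolean encoding and the names `DISCIPLINES` and `discipline` are assumptions made for this sketch.

```python
from itertools import product

# Len's two dimensions, encoded as booleans: (practical, subjective) -> discipline
DISCIPLINES = {
    (False, True): "art",
    (False, False): "science",
    (True, True): "craft",
    (True, False): "engineering",
}


def discipline(practical: bool, subjective: bool) -> str:
    """Look up the discipline for one combination of the two dimensions."""
    return DISCIPLINES[(practical, subjective)]


# Every combination of the two dimensions needs a name; a missing entry
# here would be the kind of 'empty slot' that engineering turned out to fill.
missing = [combo for combo in product([False, True], repeat=2)
           if combo not in DISCIPLINES]
print(missing)  # []
```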

This model was useful to me because it created a fourth slot that had to be filled, and it was obvious that engineering went into that slot, but most discussions about art/craft/science omit engineering, mostly I think because the word has a different feel than art, craft and science.

Don’t get hung up on the names assigned to the two dimensions of this model; they’re just the best I’ve thought of so far.

The practical vs not practical dimension is really about whether the result of the practice of this “discipline” (and I think they all do deserve to be called disciplines) has intrinsic value or its value derives from its use.

The subjective vs objective dimension is about whether the experience is personal and individual or whether it is “universal”. These words are problematic because we glibly talk about the “universal truth” or “shared meaning” of great art, but the key idea here is that this shared meaning is emotional, and thus ultimately personal, not cognitive. We can ascribe cognitive aspects to it, but these are secondary; the immediate experience is emotional and personal. We “like” it or we don’t like it. Its “truth” is personal.

The other thing is that the personal nature of the expression of art or craft means that the role of a unique personal style has a degree of importance that is not the case for science and engineering. While unique contributions are important to science and engineering, so is confirmation of their universality by duplication of the results. Indeed, it is that confirmation that affirms the “value” of the contribution. And it is the uniqueness of the contribution itself, not the “style” of the contribution, that matters, even though we often refer to the particular “style” characteristic of a scientist or engineer’s work.

So, what does all this have to do with EA?

To be determined.

len.

Len: all of this sounds very much like an old model of mine that I blogged about a few months back: see ‘Sensemaking and the swamp-metaphor’ and ‘Sensemaking – modes and disciplines’. It’s not quite the same – your ‘practical/not-practical’ puts a somewhat different twist on it – but close enough that we could perhaps do some useful comparisons and cross-maps there.

Your comments, perhaps?

Yes. My immediate thought is to integrate our two 2 x 2 planar maps into a 2 x 2 x 2 cube and see what happens…

len.

“2 x 2 x 2 cube”: first guess is that it’d be kinda interesting to cross-map it to Boisot’s ‘i-space’ http://www.ispaceinstitute.com/framework/framework.html – we might well find ourselves with a similar kind of pathway. (Personally I’m not that happy with i-space – I think it’s too constrained, and even misleading in places – but I know that others place high store by it.)

Either way, it would usefully extend the same metaphor about real-world contexts always having some elements ‘in’ every part of the context-space map.

I left out an important concept in the discussion of the “subjective” aspect, and that is the role of aesthetics.

Again, we often talk about the “beauty” of certain theories, and we typically prefer a “pretty” concept to an “ugly” concept, but the role of aesthetics in the evaluation of objective truth is secondary, while it is often central to the evaluation of subjective “truth”.

len.

Len: Very, very strong agree with you here about aesthetics and suchlike. When I explored effectiveness in relation to the Five Elements (in essence, a fully-cyclic version of Tuckman’s ‘Forming / Storming / Norming / …’ sequence), I labelled the cross-map to the ‘People’ (i.e. subjective) phase as ‘Elegant’, in the same scientific sense of ‘elegance’ that you describe.

Tom, I’ve been going round in circles about responding to this – partly because I’ve in parallel been writing something myself, which I wanted to concentrate on first. It has a significant overlap with what I understand to be the underlying discussion here. And partly because I don’t want to get involved here in a discussion about what exactly we mean by art, craft and science (and now engineering too).

I think that discipline and rigour are much needed in enterprise architecture. I don’t regard those as restricting the thinker to the purely rational. Intuition is equally a part of this. Einstein said that, which in itself is good enough for me, but here’s a nice quote from Thomas Lewis, Fari Amini, and Richard Lannon, A General Theory of Love. I pulled it off of Ruth Malan’s Trace and she got it from Maria Popova.

“Science is an inherent contradiction — systematic wonder — applied to the natural world. In its mundane form, the methodical instinct prevails and the result, an orderly procession of papers, advances the perimeter of knowledge, step by laborious step. Great scientific minds partake of that daily discipline and can also suspend it, yielding to the sheer love of allowing the mental engine to spin free. And then Einstein imagines himself riding a light beam, Kekule formulates the structure of benzene in a dream, and Fleming’s eye travels past the annoying mold on his glassware to the clear ring surrounding it — a lucid halo in a dish otherwise opaque with bacteria — and penicillin is born.”

What you refer to as scale requires us to have a disciplined and rigorous approach. In EA this can be provided (or at least assisted) by the use of one or other smart framework. A smart framework is one that helps us direct our thoughts, allows us to exercise our intuition, provides a place into which to anchor the results of our intuitive processes and gives us a checklist (in the Tom Graves sense of the word) to keep us honest. I’m sitting on the fence here about what frameworks those might be. That’s another discussion but I will say that these pages are not a bad place to start looking.

That’s what I wanted to say. That blog of mine should be out in a few days. I believe it supports what you’re saying, Tom, but in a somewhat different manner.

Stuart’s observations prompt me to cite one of my favorite papers, David Parnas’s “A Rational Design Process: How and Why to Fake It”. The thing I like about this paper is the insight that the process by which we arrive at a design and the way we ultimately express that design and its rationale (and thus, ostensibly, the way it was derived) are two different, and separate, things. The process of design is rarely “rational”, but a great design has a post facto rationale that is compelling in its simplicity and inevitability.

The two by two matrix I proposed (and probably Tom’s two by two matrix as well) is just such a post facto rationale — not in the sense that it is a “great design”, but in the sense that it describes the end results of these disciplines, not the means by which they achieve these results. I won’t even pretend that it represents anything useful about how art, craft, science and engineering are actually practiced.

len.

We can perhaps learn something from the flow from DNA (EA?) -> RNA -> proteins -> phenotypes, and on to the extended phenotype (all effects that a gene has on its environment, inside or outside of the body of the individual organism).

Source: http://www.youtube.com/watch?v=W4mYwsr9gGE

Len and others

The design process and the output – this prompts something I will always remember from Edward de Bono’s I am right, you are wrong –

Solving a problem can be hard work – when we find the solution, looking back it often looks simple – that is the nature of learning.

This provides hints to learning – starting from the solution and working back to the problem can be more easily understood sometimes!