Ensuring that the Simple stays simple

What happens when the simple definitions of Simple and Complex become complex? Do they become so Complicated that they can collapse into the Chaotic? And if so, what can we do about it?

This one’s triggered in part by a swathe of complaints from various enterprise-architecture folks about a certain ‘standard definition’ of Complex, and also in part by a double-Tweet by Bruce Waltuck:

- complexified: @tetradian @davegray @Jabaldaia the 1st challenge is to understand the difference between complicated & complex. // 2d, understand different patterns of inquiry & response in each domain (complicated vs complex)

Sounds straightforward, doesn’t it? – define some differences, define the differences of methods that apply in each case, do it, all done. Easy: no sweat!

The reality ain’t quite that simple, though…

[…as I know Bruce knows well, of course – hence ‘understand’, not ‘define’, in that Tweet above.]

To see why it ain’t so straightforward, let’s first take the usual definitions from what, for safety’s sake, we’d best call ‘the SCCC-categorisation‘:

— Simple: rule-based, predictable, direct cause/effect relationships; use categories to guide response

— Complicated: rule-based and ultimately predictable but often indirect cause/effect relationships, sometimes with feedback-loops; use analysis and algorithms to guide response

— Complex: meaning emerges from and interacts with context, ‘cause/effect’ can be misleading; use patterns, guidelines and ‘seeding’ to guide response

— Chaotic: ‘anything goes’, no discernable ‘cause’ or ‘effect’; use action to break out to another domain (usually Simple or Complex)

Yet in reality, judging from some of those complaints, what we actually have is something more like this:

I don’t find rules simple at all, to me they’re crazily complicated, or more like what you’re calling complex. To me, guidelines are much simpler to work with. And what’s the difference between ‘complicated’ and ‘complex’? – complex is just complicated that we haven’t yet worked out how to control, isn’t it? My whole job is about getting rid of complexity, and here you say it’s something we want? – that’s daft! And the service-management guys, all their work is about working with the chaos, not running away from it, so your definition don’t make no sense there neither.

Yeah, my head hurts too, trying to make sense of that… but it gets worse for a while yet… sorry…

What’s going on is that, to use those definitions above, definitions are a Simple tactic imposed on top of a Complex context, resulting in the would-be Simple becoming so Complicated with exceptions and uncertainties that it risks ending up as Chaotic. So to make it Simple, we have to accept that the Simple here is in fact Complex, and hence provide Simple rules to support a Complex anchor that allows for an emergent definition that is experienced as Simple by all those involved in that Complex social context. We avoid it becoming too Complicated by accepting that it is Complex; and we use the uncertainty of the Chaotic to provide the source-experiences that ultimately make it Simple.

Simple, yes? 🙂

Uh, no…?

Okay, so let’s do a quick SCAN on this?

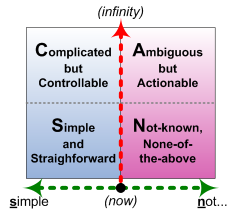

As a reminder, here’s the SCAN core-graphic again:

And the Inverse-Einstein test, which determines where we ‘are’ on the horizontal axis:

- if we do the same thing and get the same results, we’re ‘in control’ (whatever that means in the context) – which places us on the left-hand side of the graphic

- if we do the same thing and get different results, we’re not ‘in control’ – which places us on the right-hand side
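As a rough illustration only, the test can be sketched in a few lines of Python. The names here (`inverse_einstein_side`, `repeats`) are made up for the example, and the sketch assumes that results can be compared for simple equality, which real-world outcomes often can’t. A handful of identical results also doesn’t prove ‘control’; it only suggests it.

```python
import itertools
from typing import Callable, Hashable


def inverse_einstein_side(action: Callable[[], Hashable], repeats: int = 5) -> str:
    """Do the same thing several times and compare the results.

    Returns "left" if every run gave the same result ('in control'),
    and "right" if the results differed (not 'in control').
    """
    if repeats < 2:
        raise ValueError("need at least two runs to compare results")
    distinct_results = {action() for _ in range(repeats)}
    return "left" if len(distinct_results) == 1 else "right"


# Hypothetical actions: one that always behaves, one that never repeats.
always_four = lambda: 2 + 2
counter = itertools.count()
never_the_same = lambda: next(counter)

print(inverse_einstein_side(always_four))
print(inverse_einstein_side(never_the_same))
```

The first call lands on the left-hand side, the second on the right. What counts as ‘the same result’ is itself a contextual choice, which is rather the point of the rest of this post.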

A reminder, too, that the vertical axis is about ‘time until decision’: the less time we have, the more we’re forced either to a Simple form of ‘control’, or to work with the ‘Not-known’ as it is.

So: a SCAN on definitions and suchlike.

Definitions are good. They are – or should be – Simple, straightforward tools that allow us to make sense and make decisions at high speed.

The catch is that every definition is a Simple abstraction from what happens in the real-world. We choose to define it this way, to support this purpose. It’s true-for-a-given-value-of-‘true’, so to speak: one person’s subjective-truth, used ‘as if’ it’s ‘objective-truth’ – which it isn’t. And it’s important we don’t forget that fact…

Definitions may be Simple and unambiguous for each person, for use in one specific context; but they’re highly Ambiguous between different people, and different contexts. So they’re both Simple and Ambiguous – all at the same time.

If we try to use a definition as if it’s only Simple – applies to everyone, everywhere, for everything – and we try to use it for control at business-speed, what we’ll get is lots and lots of arguments, about whose definition is the right one for the context. In other words, by ignoring the Ambiguous, it suddenly gets very Complicated – and thence, when people don’t know which definition is ‘the correct one’, but don’t have time to work things out, a collapse down into ‘Not-known’. Otherwise known as the wrong side of chaos…

So we speed things up by acknowledging the Ambiguity. We ask people what they each understand and use as their ‘the definition’ for that context. If there’s time available, we make a point of exploring those definitions in an explicitly Ambiguous form, to entice a collective definition to emerge from those differences. If we don’t have time to do that, we can impose a definition and still respect the Ambiguity by contextualising the definition, such as “For this purpose, we assert that the definition of… is…” – acknowledging that other definitions are possible, but this is the one that we’ll use.

We treat that definition as part of a Simple checklist, a ‘rule’: we can say “Do it this way, because we know that it works if we do it this way”. And we couple that later with another Simple check such as an After Action Review to verify that it actually does give the same results – the Inverse-Einstein test – and, if not, decide what to do about it. In other words, Simple tactics that work with the Ambiguous, rather than trying to pretend that it doesn’t exist.

Or, in the SCCC terms, a Simple way to deal with the fact that the Simple isn’t Simple. 🙂

So to make sense of the Complicated versus the Complex (in SCCC terms again), the first thing we need to do is acknowledge that the definitions aren’t as Simple as they might seem. Once we’ve addressed that, we then have a chance to develop a shared understanding of what each might mean in practice, and how to use that shared-understanding to support decision-making.

Just a quick SCAN there, anyway, to explore something that’s turned out to be surprisingly important in practice.

Hope it’s been useful: let me know, perhaps?

Tom. Yes useful. Trying briefly to express the same thoughts slightly differently: a definition is useful to the extent that it helps us understand what an author means by the use of some term or concept. A useful definition can’t be exhaustive, in the sense that it won’t tell us everything the author wants to say about the concept. Other techniques such as ontology can be useful for this. So can straight descriptive text. Depends how “precise” the author wants to be, which in turn will depend on the use to which s/he wants to put the concept.

A definition cannot in general be the single source of truth, because, as you say, other people may have different, perfectly reasonable definitions of the same term/concept. This is particularly the case, when we are using common words (e.g. simple, complicated) in a specific context.

For me it’s generally sufficient that I should understand what the author is trying to say (and have no violent disagreement with it). We can then get on with the substantive discussion.

To the extent that you can get people to discuss their varying definitions, you may (again as you say) obtain a better view on the degree of ambiguity. What you do with your measure of ambiguity is a whole other discussion, I think. Getting people to converge on a common definition might be one result but too often that results in a poor compromise. More usefully you might achieve a common approach to the factors underlying the different definitions, which you can then take into account in the substantive discussion.

So in the case of the current discussion, if we all understand what you mean by Simple and why not everyone is happy with your definition, we can then discuss what makes things simple (or not) and how we proceed to deal with those things in real life situations (e.g. EA). Which is much more interesting than the definition per se.

Stuart – many thanks, and all good points – agreed.

On “Getting people to converge on a common definition might be one result but too often that results in a poor compromise” – yes, a very real risk. One way round that is to emphasise the point about “for this purpose we’ll use the definition of…” – that also allows us to focus more on the purpose than on disagreements about definitions. As you say, “much more interesting than the definition per se”.

Thanks again, anyway. 🙂

Let’s say we have two systems, S1 and S2, that both accomplish a set of goals, G. And let’s say that S1 is difficult to understand, build and maintain. Let’s say that S2 is much easier to understand, build, and maintain. There must be something different about S1 and S2, since they both accomplish G, yet one is much more problematic than the other.

I propose that we examine S1 and S2 closely and try to isolate some attribute, let’s call it X, that has apparently infected S1 and not infected S2.

If we assume that there exists such an X, then it would be nice to be able to measure it and discover how to build systems that are immune from X.

The next question is, what shall we call X? I’m open to suggestions. In my writings, I have called X complexity.

Now assuming that I am right, and that X exists, is measurable, and can be eliminated (as we seem to have done in S2), then for convenience, it would be helpful if we could describe the lack of X, or ~X (NOT X). Again, I’m open to suggestions. In my writings, I have called ~X “simplicity.”

Further, it would be convenient to be able to describe a system that has the least possible X and still meets G. I call such a system “simple.”

It would also be convenient to be able to describe a system that has more than this minimum value of X while still meeting G. In other words, a system that is not “simple.” I call such a system “complex.”
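A minimal sketch of these two definitions, assuming that X really can be reduced to one number per system (which is proposed above, not demonstrated). The function name and the X scores are invented for illustration, and “least possible X” is approximated here by the lowest X among the candidate systems that are known to meet G.

```python
def label_systems(x_by_system: dict[str, float]) -> dict[str, str]:
    """Label each system that meets G as "simple" or "complex".

    x_by_system maps a system name to its (hypothetical) measured X.
    The lowest X among the candidates stands in for the least possible X.
    """
    if not x_by_system:
        raise ValueError("need at least one system that meets G")
    least_x = min(x_by_system.values())
    return {
        name: "simple" if x == least_x else "complex"
        for name, x in x_by_system.items()
    }


# Invented scores: S1 carries far more X than S2, yet both meet G.
print(label_systems({"S1": 9.0, "S2": 2.0}))
```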

One advantage of this perspective is that simple and complex have a mathematical relationship with each other.

My concern about your four quadrants is that I can’t see any mathematical relationships between greater and lower values. For example, you have “simple.” As “simple” becomes less and less simple, we appear to move into “none of the above.” But clearly, by whatever definition of “simple” you might use, “none of the above” is not the opposite of simple. Nor, do I think, is “complicated,” which seems to refer to a state of mind rather than a state of being.

Some of my confusion may be because I don’t know what (if anything) is being measured on your horizontal and vertical axes.

My own take on this is to have a horizontal axis measuring complexity and a vertical axis measuring alignment to the business problem (i.e. delivering G). Then the “quads”, starting from upper left and moving clockwise, are vital, stagnant, chaotic, and simplistic. I put quads in quotes, because they aren’t quarters of a box, they are vertical and horizontal dissections of a tetragon. The advantage of this approach is that both axes are, at least in theory, measurable. And furthermore, that movement “up” is mathematically the opposite of movement “down” and movement to the “right” is mathematically the opposite of movement to the “left.”

While one need not buy into my measurement system, it seems to me useful if right/left have some relationship, as should up/down. I don’t see that in your system.

It would be interesting sometime for the two of us to have a discussion and see if there is a possible unification of our perspectives.

If you are interested in my “quads” you can find them at http://simplearchitectures.blogspot.com/2011/10/sip-complexity-model.html

Roger – oh what a joy to spar with you! 🙂

I’ll do this in two parts, because the first needs to be short and, I hope, sweet…?

You’ll probably recognise that I, uh, included a version of what I understand as your position in the ‘complaints’ pseudo-quote above. And what that ‘complaints section’ really means is…

(I hope you’re rubbing your hands together in glee at this point, ‘cos I’m gonna say it in nice big capitals…)

I WAS WRONG!

(Sort-of. Mostly. Sorta-kinda. In my defence etc etc etc. But still… 🙂 )

What I was wrong about was pushing for a single definition – especially a single definition of ‘Complex’. Saying that that definition was ‘right’, and all others were ‘wrong’. Is true that that ‘official’ definition of ‘complex’ is useful, for specific purposes – but that doesn’t make it ‘the truth’ etc. Way way way too simple. Oops… Sorry.

From here on in – looking at the detail of your comment and so on – it gets a bit more messy, a fair bit more technical, and probably a lot of fun too. But I wanted to get that part out of the way first. 🙂

Roger – thanks again: now for the more technical bit of the reply.

To my eyes, what you have here is a fairly subtle example of circular reasoning – for which the clue is the repeated use of the word “assuming”.

What you’ve described for the S2 context are a whole stream of constraints: for example, an arbitrary assertion that the attribute in question is identifiable, measurable, eliminatable, and describable in terms of mathematical relationships. You’ve then – entirely arbitrarily – assumed that because these constraints apply to S2, they must therefore apply to S1. And having given that assumption (yet kinda also immediately forgotten that it is only an assumption), gosh, lo and behold, everything that you’ve done for S2 – where it does seem to work – must therefore also work in S1. Which would perhaps be valid if S1 were indeed identical to S2. But you don’t know that that’s true. In fact you have no way of telling whether or not it is true, because the constraints filter out anything that would enable you to see it. Which is where this gets to be interestingly t-r-i-c-k-y…

So, by way of reply:

— what happens if the attribute isn’t eliminatable?

— what happens if the attribute isn’t measurable?

— what happens if the attribute is only pseudo-measurable? – we can get a metric from it, but it isn’t stable or repeatable (such as because of hidden factors or self-reflexive loops)

— what happens if the attribute isn’t even identifiable? – or at best polymorphic?

‘Cos that’s the kind of attributes I’m usually dealing with in ‘my’ S1-type context – so-called ‘soft-systems’ and the like, or inherent-uniqueness.

I’ll leave you to brew on that one for a while, and come back for the moment to the axes and the ‘quads’ in SCAN.

The horizontal axis is a possibly-linear scale of complexity (in your sense of ‘complexity’, actually) of the ‘truthiness’ of choice. (More technically, it’s the degree of modality in the decision.) There is a clear boundary between true/false or ‘digital’-logic, which only has single-level modality (0..1), versus any other form (0..n) of ‘logic of possibility or necessity’. In terms of our own understanding, it’s very straightforward, it fits well with common concepts of ‘control’, and also aligns well with standard IT-type computational concepts of ‘provability’ or ‘truth’. The moment we move outside of that simple 0..1 true/false, things get… well… messy, really. We either don’t know, or aren’t sure, or don’t know that we don’t know, or it’s “yes, sometimes, but not others, sorta-kinda, know what I mean, like…?” – that kind of messy, where the whole point is that things don’t make sense. Hence sensemaking.

The vertical axis is just ‘time-available-to-decide’. If there’s a fair bit of time, we have time to work things out. If they fit with a 0..1 logic – in other words ‘controllable’ – we should be able to find out your identifiable, measurable and, where possible, eliminatable attributes, as in your S2 system. If they don’t fit with that 0..1 constraint – i.e. where the modality is >1 – then by definition it’s going to be somewhat ambiguous: given time, we should be able to get it actionable, but not ‘controllable’ in your S2 sense. Over time we might be able to eliminate some of the ambiguity, but there are plenty of real-world contexts – radioactive fission, for example – that by definition will always remain somewhat uncertain: so we have to accept that not every system will fit your S2 constraints.

On the vertical axis, as time-available trends towards zero – i.e. the moment of action – we get squeezed down to very much more constrained options. Eventually we have to decide, on the basis of whatever choice-logic we have available. Hence the Simple ‘quad’ is a true/false choice, and in principle should be repeatable (as per your S2-type system); the ‘None-of-the-above’ ‘quad’ is a modal-logic choice, and probably is not repeatable (as per many of the S1-type systems I deal with).

Note that this doesn’t assume a definition for ‘Simple’: simple is what people experience as ‘simple’.

We then have another real fun sort-of-axis that passes through all of the quads in the S-C-A-N sequence, and that represents a scale of repeatability (again, a contextual definition of ‘repeatability’), from highest at the peak of Simple to completely unique at the peak of ‘None-of-the-above’.

In that sense, all of the axes have explicit metrics:

– horizontal: modality (with left-right boundary at modality=1, but extending indefinitely to the right)

– vertical: time-available-until-decision (from ‘NOW!’ potentially to infinity)

– curve: repeatability (absolute-repeat at lower-left to partial-repeat at mid-upper to zero-repeat at lower-right)
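Taken together, the first two of those metrics can be sketched as a small classifier. This is only an illustration: `Decision`, `scan_quadrant` and `time_threshold` are hypothetical names, and the cut-off between ‘time to work things out’ and ‘decide now’ is an assumption, since nothing above fixes a value for it. The repeatability curve is left out of the sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    modality: float        # 1 = plain true/false; above 1 = shades of possibility
    time_available: float  # time left until the decision, in any consistent unit


def scan_quadrant(decision: Decision, time_threshold: float) -> str:
    """Place a decision in one of the four SCAN 'quads'.

    time_threshold is an assumed, context-specific cut-off (same unit as
    time_available) at or below which there is no time left to work things out.
    """
    if decision.modality < 1 or decision.time_available < 0:
        raise ValueError("modality starts at 1 and time cannot be negative")

    true_false = decision.modality == 1  # left of the modality=1 boundary
    time_to_think = decision.time_available > time_threshold

    if true_false:
        return "Complicated" if time_to_think else "Simple"
    return "Ambiguous" if time_to_think else "Not-known"


# Invented examples: a quick yes/no call, and a slow judgement with many shades.
print(scan_quadrant(Decision(modality=1, time_available=0.5), time_threshold=1.0))
print(scan_quadrant(Decision(modality=3, time_available=8.0), time_threshold=1.0))
```

The hard edges in the code are a convenience of the sketch; in practice the boundaries move with the context and with who is doing the deciding.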

The complication on all of those is that the results and choices are still contextual: IT and machines are classically (though not always) constrained to true/false logic, whereas some people experience ‘simple’ rules as ‘complex’ (Ambiguous) and complex rules (patterns, guidelines) as ‘Simple’.

That’s the overall summary of the axes and metrics, anyway.

Now: my turn to go look at your axes and ‘quads’ (which look interesting and useful, certainly). And your turn to tear all of the above to shreds. 🙂

Thanks again: is real fun to spar in this way!

To be honest, I didn’t recognize my position in the complaints, but what the heck, since you offered to buy me a beer, I’ll accept. You did offer to buy me a beer, didn’t you?

Roger – beer? Yeah, sure… not quite sure how I’s gonna get it to you, though… Don’t think I can send it through the mail… comin’ over to London somewhen soon is you? 🙂