Control, complexity and chaos

Just how restricting – or even dangerous – is our seeming ‘need’ for certainty?

Over the past couple of weeks I’ve been exploring links between the SCAN sensemaking / decision-making framework and Cynthia Kurtz’s more socially-oriented Confluence sensemaking-framework. I still have some way to go on that, but in the meantime one of the themes that’s been coming up a lot in relation to that – and which forms a backdrop to both sensemaking-frameworks – is the challenge of control: the overlay of ‘order’ on natural unorder, the assertion of certainty over real-world uncertainty.

At first glance that might seem pointlessly abstract. But as futurist Marcus Barber commented, during a Twitter conversation on this theme:

- RT @rightfuture: Degree to which leaders are effective is in direct INVERSE proportion to their preference for certainty.

Or, to put it another way round, we are effective only to the extent that we’re willing to work with uncertainty.

In short, this isn’t abstract at all: it’s a fundamental issue for enterprise-architecture, business-architecture, economics, politics, education and much else besides. However uncomfortable it may feel, it’s something we need to explore if we want our organisations and institutions to be effective at what they do – and at what we do, too.

So, here’s my own current take on this.

First, a simple, straightforward assertion: life is uncertain. There’s no shortage of formal proofs for that, all the way from sub-atomic scales to cosmological ones, and pretty much everywhere in between. And as for that old saw that “the only certainties in life are death and taxes”, it’s all too clear that taxes can be avoided in many different ways, and there are a fair few possibilities for dealing with death as well. Wherever we look, reality is uncertain.

So where does certainty come from? The short answer is that things that seem certain are a selected subset of the uncertain. For example, there are some things that do seem certain: the speed of light, the force of gravity, the fine-structure constant, or the Feigenbaum constants, to name just a few. Yet as soon as we put even these supposed ‘constants’ together in any real-world context, the result is not so certain: for example, the effective speed of light can vary as a result of gravitational distortion of space-time. Certainty is the outcome of a filter that we apply to the uncertainty of the real world: and it’s really, really important that we never forget that it’s only a filter – not reality itself.

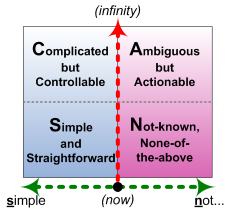

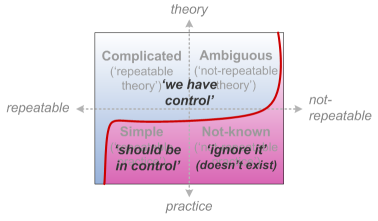

To put this into visual form, and to bring it down to a much more mundane level, let’s use the SCAN frame:

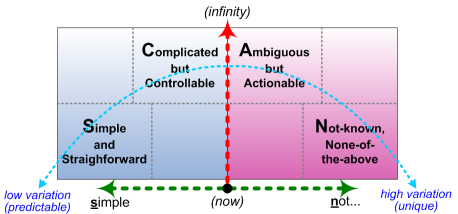

Or, for perhaps a bit more clarity, the SCAN frame crossmapped to a spectrum of perceived-variation:

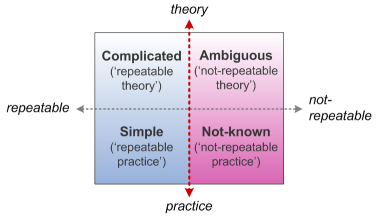

The vertical-axis of the base-frame is time-available-before-action, which in this context could be summarised as ‘degree of abstraction’, with most-concrete – right here right now – as the baseline, and more-abstract extending ‘upward’ towards infinity. The dotted-line not-quite-boundary on that axis represents the real shift of tactics that has to occur at some point as we move ‘downward’ towards the real-world: the same distinction between theory [abstract] and practice [concrete] as illustrated by the old quip that “in theory there’s no difference between theory and practice; in practice, there is”.

The horizontal-axis is a spectrum of modality: the logic of possibility, probability and necessity. Or, to put it in the language of requirements-documents, a spectrum from ‘shall’ to ‘should’ to ‘could’ – which, if we’re not careful, can too easily become a spectrum of wishful-thinking… The red-line boundary along this spectrum is what I’ve nicknamed the ‘Inverse-Einstein test’: if we do the same thing, then to the left of that boundary we (expect to) get the same result, and to the right of it we (probably) don’t.

A key point here is that perfect repeatability – perfect certainty – only occurs at the very left-hand edge of the frame, where everything is exactly the same, every time, both in theory and in practice. Which doesn’t often (or ever?) occur in real-world practice: effective-repeatability is the ability to adapt to variation and still get the same result – which is not the same as repeatability itself.

Over on that left-side, driving effective-repeatability, there are two factors we need to address:

- the variety in the context – the number of variables, of the interactions between them, and the overall number or range of possible ‘states’ that can arise from this

- the ‘variety-weather’ in the context – the variety of the variety itself, in terms of changes in numbers or nature of variables, and their interactions and possible ‘states’, and the rate and nature of that change
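To give a rough feel for how quickly that first kind of variety grows, here is a minimal sketch. It rests on simplifying assumptions of my own for illustration: every variable has the same fixed number of discrete states, and only pairwise interactions are counted. The function name `variety` and its parameters are hypothetical, not part of SCAN.

```python
from math import comb


def variety(num_variables: int, states_per_variable: int) -> dict:
    """Count pairwise interactions and joint states for a toy context.

    Assumption (illustrative only): each variable has the same number of
    discrete states, and only interactions between pairs are counted.
    """
    return {
        "variables": num_variables,
        # every unordered pair of variables is one possible interaction
        "pairwise_interactions": comb(num_variables, 2),
        # every combination of per-variable states is one overall 'state'
        "possible_states": states_per_variable ** num_variables,
    }


for n in (3, 10, 30):
    print(variety(n, states_per_variable=2))
```

Even in this toy form, the pairs grow roughly with the square of the number of variables, while the possible ‘states’ grow exponentially. And that is before any ‘variety-weather’ changes the variables themselves.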

The scope of variety is what we might call ‘complicatedness’. The ‘Complicated’ domain is all about the (theory of) ability to adapt to the real number of variables in a context. As long as things do conform to linear-paradigm expectations of causality, repeatability and linearity, we can calculate exactly what the next action towards a desired point of control would be.

And given modern technology, it might seem that we can cope with almost any level of complication, any number of variables, and any amount of feedback and delays and other linear-interactions between those variables. So for some people, ‘complexity’ is solely a synonym for ‘complicatedness that we haven’t quite resolved yet’, on our way towards the perfection of absolute control, absolute certainty.

But there’s a catch – or two of them, rather, which also happen to align with the SCAN axes:

- time (vertical-axis, towards the ‘Now’): it takes time to sense and calculate each variable and the relationships between each pair of variables – and the universe often won’t wait while we do this

- ambiguity (horizontal-axis, towards high-variation): the real-world includes randomness, non-linearity and ‘wicked problems’ – for which linear-paradigm assumptions are often worse than useless

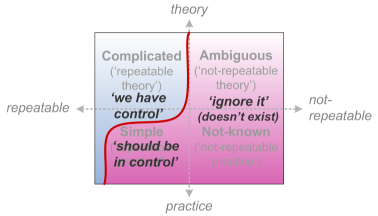

In essence, ‘control’ is an abstract ideal that can only ever exist (if that’s the right word) somewhere way out towards infinity on the upper-left of the SCAN frame. By contrast, the closer we get to real-time action, and the more we face up to the real uncertainties of the real-world, the less realistic that ideal of ‘control’ will become.

Unfortunately, that ideal of ‘control’ is very, very desirable, to a very large number of people. So huge problems can easily arise from this – especially in business, where not only are most managers still trained in the Taylorist linear-paradigm story of ‘scientific management’, but they also for the most part live and work in a literally-imaginary world of filtered-information, where the rough edges and uncertainties have all been smoothed away, and things can look a lot more certain than they really are. As a result we get a kind of skewed Inverse-Einstein boundary, where things look more repeatable in theory than they are in practice:

In principle, ‘complexity’ focusses on the (theory of) ability to adapt to variety-of-variety – inherent-uncertainty, non-linearity, wicked-problems and suchlike. But the catch is that it’s often ‘sold’ as if it’s just another variant on the complicated – pattern as predictable algorithm, probability as certainty, and so on.

This especially occurs whenever the word ‘science’ is introduced into the picture – with long out-of-date notions of ‘science’ being (mis)used to provide a gloss of certainty where, by definition, none actually exists. As long as it can be held somewhat in the abstract, away from real-time action where inherent-uncertainty is an unavoidable fact, it’s possible to prop up a comforting – and, for its proponents, very profitable – delusion that even extreme-ambiguity can somehow be crowbarred into some form of ‘control’ via an often-spurious misframing of ‘the science of complexity’. As a result, we end up with a fully-skewed Inverse-Einstein boundary, where those who deal only in the abstract and the imaginary can fool themselves into believing that their whole world ‘should’ be under their control, because it’s all ‘scientific’:

But the reality is that there’s real science, and there’s pseudo-science: and to be blunt, much of what purports to be ‘complexity-science’ falls firmly into the latter category. For example, circular-reasoning, systematic evasion of the subjective, selective ‘fudging of the facts’, and misframing of probabilities and patterns as pseudo-absolutes, are all endemic in those so-called ‘sciences’ – especially in their purported application in business.

[The big-consultancies seem especially notorious for this, but certain ‘big-name’ consultants are probably the worst offenders of the lot: best not to name names here, of course, though by now most people in ‘the trade’ would know who they are.]

To make things worse, their so-called ‘best practices’ and suchlike frequently confuse content and context – scrambling the context-specific relationships between methods, mechanics and approaches upon which any context-viable resolution would depend. The end-result is chaos, in the colloquial sense – for which the purported ‘cure’ is more of the same pseudo-science. It doesn’t help…

I’ve explored ‘chaos’ in some depth in another recent post, with an emphasis on four distinct forms of chaos:

- ‘chaos’ in the colloquial sense, as a state within which no sense can be made – and hence something that many people would want to prevent

- ‘chaos’ as the ‘necessary jolt’ – a brief burst to shake things up when they’ve gotten stuck

- ‘chaos’ as non-linear dynamics – a subset of, or view of, chaos that can be described by ‘chaos-mathematics’

- ‘chaos’ as infinite-possibility – a source for innovation, improvisation and the like

The fundamental here is that chaos is about the unique, about inherent-uncertainty – especially in real-time contexts in the real-world. Some aspects of the world are (relatively) predictable; yet many are not, and it’s the requirement to deal with that reality that drives much of my own work in enterprise-architecture and the like.

Most people, most places, most contexts, will share many similarities with others: that fact is important, and very useful in business and elsewhere. Yet also everyone is different, themselves, unique; everywhere is different, with its own local distinctiveness; every context is different, each with its own subtleties that render so-called ‘best practice’ so problematic in practice.

- In medicine, what works with one patient may not work with another: yet we need to know what to prescribe for this patient, right here, right now. (The same applies in sales, in customer-service, and in many other human-oriented areas.)

- In architecture, in town-planning, in infrastructure-design, what works in one place may well not work at another place, or even at another time: yet we need to know what to do with this place, right here, right now.

- A new idea may go far with one person, in ‘the right place at the right time’; yet fizzle out to nothing with someone else, or somewhere else, or somewhen else, or any combination of those.

- A storm or earthquake or bushfire may have little impact if it strikes at one place, yet enormous impact if it strikes at another, or at a different time: yet we need somehow to plan for any possible disaster, at any possible place – even though all of them are different.

That’s ‘chaos’ in the sense that I mean it here: working with the inherent-uniqueness of each context.

Just as with complexity, there are some forms of science that can be used here, but it’s really important to use them as sciences of chaos, and not as pseudo-sciences of complicatedness or complexity. For example, chaos-math does not make anything any more predictable: at best it can be very precise about the unpredictability. Likewise, describing chaos solely as an input to complexity might arguably be valid in its own way, but kind of misses the point: there’s a lot more to functional-chaos than just that one aspect of the story. And since by definition there are no patterns to the chaos-elements in a context, trying to use complexity-concepts as if they’re ‘The Answer To Everything’ – as some people do – can be dangerously misleading.
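As a small illustration of that point about chaos-math, here is a sketch using the logistic map, a standard textbook example of non-linear dynamics. The choice of map, the parameter `r = 4.0`, the starting values and the function name `logistic_trajectory` are all my own assumptions for illustration. The rule is completely deterministic, yet two starting-points that differ by one part in ten billion soon end up nowhere near each other.

```python
def logistic_trajectory(x0: float, steps: int, r: float = 4.0) -> list:
    """Iterate the logistic map x -> r * x * (1 - x), returning every value."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs


# Two runs of the same rule, with starting-points almost identical
# (illustrative values, not taken from any real-world measurement).
a = logistic_trajectory(0.2, 100)
b = logistic_trajectory(0.2 + 1e-10, 100)

# Gap between the two runs at each step
gaps = [abs(p - q) for p, q in zip(a, b)]

print("gap at start:", gaps[0])
print("largest gap over the run:", max(gaps))
```

The math is exact about how the gap grows; what it cannot do is tell us where this particular run will be, unless we know the starting-point to impossible precision.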

Chaos in this sense is also not the same as random, although randomness can indeed play a part within it. For example, we can identify a nuclear half-life often with extreme accuracy, but each fission itself is, technically speaking, exactly random – and yet sometimes we may need to know exactly when and where this atom will split, because that in itself may be the key to a chain-reaction. (The natural fission-reactors at Oklo in Gabon provide a real-world example of this.) Every coin-toss is (in principle) exactly random – and yet a great deal may depend on the actual outcome of each single one of those. Although some form of randomness may underpin functional-chaos, randomness alone is often not all that important: it’s the relationship between that randomness and the practical implications of each random-event that matters here.
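The half-life example can be sketched in a few lines. This is a toy model only, with assumptions of my own: each atom’s decay-time is drawn independently from an exponential distribution, and the half-life of 10.0 time-units, the atom-count, the seeds and the function names are all hypothetical. The aggregate comes out close to one-half in every run, yet the fate of any one atom is different each time.

```python
import math
import random


def decay_times(num_atoms: int, half_life: float, seed: int) -> list:
    """Draw one random decay-time per atom (toy model, exponential decay)."""
    rng = random.Random(seed)
    rate = math.log(2) / half_life  # decay constant for this half-life
    return [rng.expovariate(rate) for _ in range(num_atoms)]


def surviving_fraction(times: list, at_time: float) -> float:
    """Fraction of atoms that have not yet decayed at the given time."""
    return sum(t > at_time for t in times) / len(times)


# Two independent 'runs of the universe' (different seeds)
run_a = decay_times(20_000, half_life=10.0, seed=1)
run_b = decay_times(20_000, half_life=10.0, seed=2)

# The aggregate: both should be close to one-half
print(surviving_fraction(run_a, 10.0), surviving_fraction(run_b, 10.0))

# The unique: when does atom number 0 decay in each run?
print(run_a[0], run_b[0])
```

If what matters is the half-life, the randomness washes out; if what matters is this atom, right here, right now, the half-life tells us almost nothing.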

As a quick summary, colloquial-chaos occurs whenever:

- we try to apply certainty (‘control’) to inherent-uncertainty

- we try to apply patterns or heuristics (‘complexity’) to inherent-uniqueness

Or both together, of course.

By contrast, functional-chaos occurs when we work with uncertainty and/or uniqueness – rather than fighting against it, or pretending that it doesn’t exist.

And to me, that’s a fundamental that’s still far too rarely addressed in enterprise-architecture and elsewhere. A lot more work needed on this, I think?

It pisses me off when climate scientists explain that if we cut emissions by X % the warming will stay at Z degrees. I don’t doubt that there is a connection, but the certainty about the exact reaction, to an accuracy of one degree Celsius, is amazing.

Also annoying was when our Cabinet seemed to know exactly what follows if we do or do not support Greece (or the stupid banks which had lent the money). But I suppose the politicians know that they must feed people only absolutely certain solutions 😉