Efficient versus effective

What is ‘efficiency’? – in particular, ‘efficiency’ in any system that’s subject to real-world variances?

Starting-point for this one was yet another passing item in my Twitterstream:

- RT @MentalHealthCop: If providers are contractually obliged to run hospitals at 98% capacity, is it any wonder that the implications of s140 are ignored?

‘MentalHealthCop’ is Inspector Michael Brown, a serving police-officer in the Midlands in England, who focuses on mental-health issues that front-line police have to deal with on an everyday basis:

[Brown] refers to [police] officers as “street-corner psychiatrists”, who are often first at a scene when a person might need expert medical attention from mental health professionals.

In much the same way that the irrepressible Simon Guilfoyle writes a hugely-insightful blog on systems-thinking in the police context, Brown writes an award-winning blog on mental-health issues in the police context – which, equally clearly, is about the clash between Taylorist-style myths of ‘control’ and certainty, versus the much messier reality out there on the streets. Which really comes through in that tweet above…

I’d guessed from the context that ‘s140’ in the tweet above referred to Section 140 of the [UK] Mental Health Act – a guess that turned out to be correct, as indicated by a post on ‘Section 140 Mental Health Act’ on Brown’s blog. It’s kinda sobering reading, of course – most of us don’t have to deal with this kind of mess in our daily lives, though it’s good to see (as in this example) that at least someone is willing to do so.

Yet not, it seems, the classic Taylorist-style managers who seem to infect everything these days, with their seemingly ever-decreasing grasp of how real-world systems actually work. As Brown wryly comments:

I still really enjoy the example of a MH trust [mental-health services-provider] who decommissioned beds to save £1.4m and subsequently had to spend £4.1m on out of area and private placements.

Which brings us back to that point about ‘efficiency’.

Taylorists and other ‘control-freaks’ obsess about ‘efficiency’ – but using a linear-only model of efficiency, not a whole-of-system model. The result is a proliferation of point-solutions that at first glance can seem ‘efficient’ within the immediate local context – we save money by closing down a ward – but turn out to be ludicrously inefficient, as measured in real terms, because the actual need (a legally-mandated requirement, in this case) can no longer be met by the supposedly ‘more-efficient’ system.

Which brings us to the notion of requisite-inefficiency (a theme championed recently in the enterprise-architecture space by Ivo Velitchkov, if I remember correctly?). The point here is that, to be effective in terms of the specified real-world need, a viable system will require a certain level of ‘inefficiency’. Factors behind this ‘requisite-inefficiency’ will include:

- variance in system-load: implies ‘inefficiency’ both in terms of capacity held in reserve for peaks above the average/mean load, and in terms of ‘spare’ capacity for troughs below the average/mean load

- duration of ability to run at continuous full load: in most engineering, a system that is run at continuous full-load will tend to have a shorter useful-life and/or be more prone to breakdown than a system that has the same peak-load capability but is usually run at lower load

- whole-of-system effects: the well-known trade-off between local ‘efficiency’ versus whole-of-system efficiency

Which should tell us that neither whoever-it-was that specified a ‘run at 98% capacity’ contractual-requirement, nor whoever-it-was that signed that contract, had much if any clue of how hospitals and other emergency-systems actually work…
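To put some rough numbers on the first of those factors, here's a minimal sketch using the classic Erlang B loss-formula from queueing theory. It rests on assumptions that are mine, not Brown's: demand arrives at random (Poisson), a patient who finds every bed full is sent elsewhere rather than queued, and the ward has a purely hypothetical 20 beds. The function names are illustrative only.

```python
def erlang_b(offered_load: float, beds: int) -> float:
    """Probability that an arrival finds every bed occupied (Erlang B).

    offered_load is average demand in 'beds' worth' of patients
    (arrival rate multiplied by average length of stay).
    """
    blocking = 1.0  # with zero beds, every arrival is turned away
    for n in range(1, beds + 1):
        # Standard recursion: add one bed at a time
        blocking = offered_load * blocking / (n + offered_load * blocking)
    return blocking


def occupancy(offered_load: float, beds: int) -> float:
    """Average fraction of beds in use, counting only admitted patients."""
    return offered_load * (1.0 - erlang_b(offered_load, beds)) / beds


if __name__ == "__main__":
    BEDS = 20  # hypothetical ward size, chosen only for illustration
    for offered_load in (12, 16, 20, 30, 60):
        print(f"demand {offered_load:>3} beds' worth: "
              f"occupancy {occupancy(offered_load, BEDS):.0%}, "
              f"turned away {erlang_b(offered_load, BEDS):.0%}")
```

In this toy model, occupancy only creeps up towards the high nineties when demand is far above what the ward can hold, which means a large share of arrivals is being turned away. The exact figures depend heavily on ward size and on how real demand behaves, so treat it as an illustration of the shape of the trade-off, not as a planning tool.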

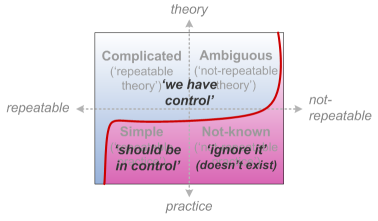

As usual, I’ll throw a SCAN diagram into the mix here:

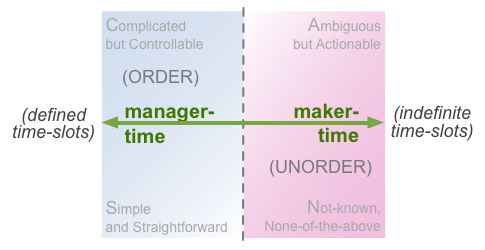

For any real-world context, ‘efficiency’ is, in essence, a Taylorist myth, arising out of what we might describe as a manager’s belief that time and resources could or ‘should’ be perfectly-predictable or ‘controllable’ entities. By definition, the real-world covers the whole of the context-space, the entirety of the SCAN frame. But those who live in the imaginary-world of ‘manager-time’ see only the parts of it that fit the Taylorist myth of ‘control’ – everything else is either ‘Someone Else’s Problem’, or is deemed not to exist because it upsets the comforting certainties of ‘the perfect world’.

In essence, that ‘98% capacity’ contract assumes that the system overall, by definition, will never experience more than 2% variation in its load, and that it can be run continuously, at all times, at no less than 98% of maximum load. In SCAN terms, it also asserts that the ‘boundary of effective-certainty’ actually experienced within the system is way over to the right-hand side, where – in theory, at least – uncertainty and unknowability have been reduced to almost negligible levels. The real-world ‘boundary of effective-certainty’, however, can be hugely different from the manager’s comforting fantasy – the kind of disparity in this SCAN context-map:

Myths of ‘efficiency’ drive the kind of ‘imaginary-world’ versus real-world skew that we see in that diagram above – the imaginary-world of theory (upper-blue) versus real-world complexity and uncertainty (lower-red).

More worryingly, such managers live in a world where they supposedly ‘must’ attempt to force the real-world to match the arbitrary assertions of the imaginary one – as we see from that absurd ‘98% capacity’ contract. In many cases, they gain personal bonuses to the extent that they ‘succeed’ in forcing the real-world to (seem to) fit the imaginary one, and/or they face personal penalties to the extent that they don’t ‘succeed’ in doing so. By definition, it’s not a constraint that’s likely to enable any kind of viability – or even sanity – for any of the players in the system, or in the overall system itself… as is painfully evident in the real-world ‘messes’ that Brown and Guilfoyle so often describe on their blogs.

Sigh…

Efficiency is a component of effectiveness, but can never – and must never – be used as a substitute for effectiveness. Perhaps the simplest way to summarise the difference would be this:

- efficiency is measured in terms of a predefined ‘solution’

- effectiveness is measured in terms of the actual need

As enterprise-architects and the like, all of us would know about the dangers of ‘solutioneering’ – those all-too-common attempts to posit a predefined ‘solution’ as itself the requirements for addressing a problem. In effect, the moment someone introduces a predefined ‘efficiency-metric’ into a context or a contract, they’re solutioneering. Don’t let them do it: it doesn’t work – not in the real-world.

Instead, we must identify the requisite-inefficiency in the overall system, and embed that in the architecture-requirements and suchlike.

Which means, first, we need to identify what the actual required outcomes are, the guiding-principles and so on, for the overall system – because that’s what tells us what ‘effectiveness’ actually is in that context.

Only then can we identify what options for ‘efficiency’ might exist – which, in any real-world human context, would never be as high as that putative ‘98% capacity’.

Above all, never, never, allow ‘efficiency’ to take priority over whole-of-context effectiveness.

Never. That point should be hammered home at every possible opportunity, and expressed in everything that we do in our enterprise-architectures.

And, perhaps, literally hammered onto the brow of those still-too-many politicians and managers who are so foolish as to assert otherwise…?

Bah.

Over to you for comment, anyway.

Tom,

It recently occurred to me that there is something even more fundamental than either effectiveness or efficiency. It is convenience.

Think about it. If something is sufficiently convenient for someone, she will sacrifice large amounts of efficiency and effectiveness for the sake of such convenience. That’s why we as individuals and as communities are so wasteful. Look at “conveniences” like fast food, disposable anything, even convenience stores.

People generally aren’t all that interested in making their own lives more efficient or more effective. It’s typically managers who want the people they manage to be effective and efficient. People generally just want things to be easier for themselves, i.e., more convenient, even if it means being less efficient or effective.

Never underestimate the power of the path of least resistance. People prefer it to the most efficient path and the most effective path. The path of least resistance is actually quite a profound concept, underlying not only human behavior, but also evolution and physics. See also, principle of least effort and principle of least action.

https://en.wikipedia.org/wiki/Path_of_least_resistance

BTW, it’s tempting to use “expediency” instead of “convenience”. Then we could talk about the 3 E’s: efficiency, effectiveness, and expediency. Unfortunately, the latter has negative connotations.

Great points, Nick – thanks!

The catch is that there’s so much that this brings up for me, and that I need to reply to, that I’ll do so in a separate post. Watch This Space?