Is culture-change the same as software-change?

Should we approach culture-change as if it’s the same as software-change? At a current conference, James Archer seemed to interpret Alex Osterwalder as saying just that:

- jamesarcher: Company culture can be methodically designed, built, and tested almost like a software product. #bos2015 @AlexOsterwalder

To which – probably no real surprise to people who know me – I kinda fulminated:

- tetradian: Oops… a really, really fundamental error, as wrong as the delusion of ‘engineering the enterprise’….

James Archer seemed somewhat dismissive of my retort:

- jamesarcher: I used to think the same, but have since had enough experience to watch it happen (and manage it myself as well).

I tried another tack to make the point:

- tetradian: a simple question: do you want your social-relations/purpose viewed as “like a software product”? – if not, consider again… // yes, there are some ‘designable’ elements: but if we think it’s something we can control, we’re deluding ourselves, dangerously

To which James Archer again came back, replying to the first of my two Tweets just above (“consider again…”):

- jamesarcher: if you were the CEO of a company with a dysfunction culture, would you not plan to shape it a different and intention way?

And to the second (“deluding ourselves, dangerously”):

- jamesarcher: I’d consider it more dangerous to think of culture as a dangerously untouchable part of our lives.

Both of which points are valid and true, of course – in their own way. But there’s a huge, huge ‘It depends…’ sitting in there – and if we miss how important it is, we’re likely to cause huge damage to the respective culture, without being able to understand what’s happened, or why. I summarised this in a quick Tweet, though recognising that that comment wasn’t enough on its own:

- tetradian: of course, but software is tame-problem, done to; culture is wild-problem, done with – v.different! will blog on this

Which, in turn, brings us here – where we can look at such matters beyond the max-140-chars constraint of Twitter…

To start this off, let’s make an assertion here: that pretty much by definition, culture-change demands that we address the whole context of that culture. There are a fair few ‘It depends…’ aspects to that, of course, but it’s probably close enough for now – particularly for what “the CEO of a company with a dysfunction[al] culture” would need to know before trying to change anything.

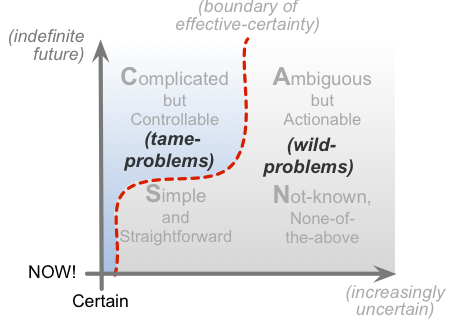

Given that, it’s probably simplest to set the scene with a couple of SCAN cross-maps. The first summarises the types of tactics that we’d typically best apply to different ‘domains’ of the context:

And the other crossmap summarises whereabouts in a context we’d typically come across tame-problems versus wild-problems:

Some of that context – emphasis: some of that context – will indeed be amenable to tame-problem tactics: rules, regulations, predefined ‘solutions’ and suchlike. The idea with tame-problem tactics is that if we do the same things the same way with everyone, then we’ll get the same result from everyone too. And it does work – in some parts of the context. But not all – and that’s the point we need to note here.

In the parts of the context where wild-problem elements predominate, we cannot use tame-problem tactics. If we do attempt to do so, we’ll discover very quickly why the perhaps-more-common term for wild-problem is ‘wicked-problem’ – because things can turn wicked on us very fast indeed. And the more we try to ‘control’ the culture, the worse it’ll get.

The key point to understand with all wild-problems is that they’re on the far side of the boundary of effective-certainty. Which means that if we do the same things, it’s possible, probable or even near-certain that we’ll get different results each time. Or, conversely, that to get the same effective-result in each case, we might, may or must do things differently each time. Which, for the latter, means that we need to know what to do differently each time. Hence, as Claire Lew put it, in that same Twitterstream:

- cjlew23: If you want your company culture to be a certain way, figure out which behaviors you are enabling – and blocking. @AlexOsterwalder #BoS2015

And just to make it even harder, it’s often very different in practice than it might at first seem in theory – as that skew in the tame/wild diagram above suggests. Which is why this gets kinda tricky…

Which brings us back to the question of whether culture-change is the same as software-change. Because a lot of the answer to that depends as much on how we understand software-change as on how we understand culture.

If we think of software-development as just programming a machine to do the same thing over and over, in a supposedly-‘logical’ way, then yeah, we’re likely to approach culture-change in much the same manner, too – viewing the organisation and the people within it as programmable, predictable, controllable elements within a machine. That’s pretty much the Taylorist dream: programmable people, piling up the profit for the ‘owners’ and the managers too.

But the catch is that it does not work with cultures. Cultures are ecosystems: and the outcomes of an ecosystem are always an emergent effect arising not just from the sum of the elements themselves, but from the interactions between the elements of that ecosystem. So even if we could somehow program every one of those people to respond always in the exact same way, the overall outcome would still be somewhat unpredictable, always somewhat ‘out of control’. The only thing that ‘control’ does do well is suppress all creativity, innovation, difference – which, since these are themes that most organisations often need the most, makes a ‘control’-based approach to culture self-defeating even before it starts.

And it not only does not work for cultures, it doesn’t work all that well for software-development either. Hence the Agile Manifesto and its underlying human-oriented principles. If we approach software-development as an expression of human culture – rather than solely an attempt to define how to ‘control’ a machine, in a context that’s broader than just that one machine – then we do it in a very different way. Usually a much more successful way, too.

But what definitely does not work is doing it in a muddled, mixed-up halfway-house. Far too many so-called ‘Agile’ teams try to gain all the advantages of genuinely-agile development – rapid pace, iterative development, higher quality – but whilst still clinging on to a rigid top-down command-and-control culture. It doesn’t work: we end up with the disadvantages of both approaches, and the advantages of neither…

The same with cultural change. There are some parts to which tame-problems do apply, and do work. Yet even regulatory compliance won’t work if the culture doesn’t support it – as in a classic ‘pass-the-grenade’ culture, for example. Hence, as Dave Gray put it, somewhat later in that Twitterstream:

- davegray: Culture is like a garden. It can be designed or grow wild but either way, it evolves organically @jamesarcher @13bitstring @AlexOsterwalder

And late towards the end of that Twitterstream – or perhaps just coincidentally anyway – Bard Papegaaij dropped in with three comments that are crucially important for culture-change:

- BardPapegaaij: First rule for dealing with complex systems: you are a part of, not apart from, the system you operate in. #Complexity

This is where culture-work will necessarily part company from Taylorism, because the latter is always about ‘doing’ change to others, or at others – rather than what is actually needed here, which is to accept being part of and embedded within the change itself. Yes, in all culture-change, we work with what facts we have: but often feelings are the most important facts that we face here – even though they’re not what most Taylorists would think of as ‘fact’…

(At this point it’s probably worthwhile remembering the old warning that “people do not resist change, they resist being changed” – especially if they perceive the change as being only for the benefit of others, at the expense of themselves.)

All of which is reaffirmed in Bard’s second note:

- BardPapegaaij: Second rule for dealing with complex systems: there’s no ‘outside perspective’ of the complex system you’re part of. #Complexity

This is why a cultural change only works when it’s modelled in action by leaders and others. If the self-styled ‘leaders’ demand that everyone else should change, but change nothing in the way that they themselves do things, in how they relate with others, and so on? – well, no, it ain’t gonna fly. People take note of what leaders do – not just what they say they’re going to do…

(Stafford Beer’s POSIWID is another useful reminder here: “The [effective] purpose of the system is [expressed in] what it does” – not just what people say it ‘should do’.)

And Bard’s third note brings us back to a key difference between tame-problems and wild-problems:

- BardPapegaaij: Third rule for dealing with complex systems: simplification doesn’t improve the system; it usually dramatically degrades it. #Complexity

For tame-problem elements in a context, Taylorist-type tactics probably do apply: simplify, simplify, simplify. But for wild-problem elements, as Bard warns, attempts at simplifying will almost invariably make things worse – not least because overly-simplistic ‘solutions’ so often slice off or elide over crucial nuances upon which success and viability would depend.

Which perhaps brings us to one last point, about anticlients – because the reality we face is that a flat-footed, over-controlling ‘culture-change’ risks creating not just vast numbers of anticlients, but vast numbers of anti-employees too. Perhaps take a look at the post ‘Anticlients are antibodies’ for more information on that? – it’s not a risk that we could wisely ignore…

Leave it at that for now, anyway – over to you for comments and suchlike, if you wish?

Hi Tom,

A couple of observations.

Firstly, while James’s assertion does seem startling at first sight, perhaps he has a very organic model of software development in mind? I’m not sure I would always characterise software development as a tame problem.

Secondly, when I worked for a large organisation, it was indeed taken through a change programme which was methodically designed and built and which was quite successful to start with. A diverse team of consultants was involved and the people did change from being operationally focused to being customer focused. It involved firing a large proportion of the top management team, lots of communication and training and a new performance management system, all very professionally done. The good thing about it was that there was a lot of management engagement with people during the change, and for quite a while it worked very well….until a new Chief Executive was appointed and over time, it reverted back to some extent. It was very expensive and I don’t think it was sufficiently well embedded in the organisation to survive a change of management.

I don’t think many people would advocate this type of approach today, but I guess it shouldn’t be discounted entirely.

Sally

On “perhaps he has a very organic model of software development in mind”, it didn’t seem so – or at least that’s how I interpreted his “methodically designed, built, and tested almost like a software product” [my emphasis]. That’s what I (over?)-reacted to.

On “I’m not sure I would always characterise software development as a tame problem”, later on in the post above I made it very clear (I hope?) that Agile-done-right does not view software-development as a tame-problem. Classic Waterfall does; and most organisations’ attempts at ‘Agile’ in practice seem to be a horrible mess of sort-of-Agile-so-we-can-do-what-we-like with a command-and-control overlay, which gives the advantages of neither model and the disadvantages of both…. i.e. Not A Good Idea.

On “The good thing about it was that there was a lot of management engagement with people during the change” – yes, that’s the crucial bit, and…

On “…until a new Chief Executive was appointed and over time, it reverted back to some extent” – yes, that’s what kills it – particularly a senior executive who expects everyone else to change but won’t change their own behaviours in line with what they demand from everyone else.

On “It was very expensive” – yep. Which is why it’s kinda sad that in those kinds of cases it can take just one ‘senior idiot’ to blow everything that’s been gained through all of the previous work. Oh well.

On “I guess it shouldn’t be discounted entirely” – I never said it should. All I’m saying above is that trying to use tame-problem tactics – in essence classic top-down command-and-control, ‘here-are-your-new-personal-values-by-which-you-shall-live-for-the-sole-benefit-of-the-stockholders’ etc – will not work for all of it. Some, usually yes; all of it, almost invariably no. That distinction is kinda important?

On your final point, Tom, I’d like to make it clear that the change was not enacted by “classic command and control”. Here are some quotes about it from a book written by one of the consultants:

“You don’t change culture by trying to change the culture”

“Power in the culture was not about command-and-control…it was about information and who had the most….therefore a primary focus of the change effort was behaviour change in the direction of openness, more trust in others, and greater teamwork…a re-ordering of values would then ensue”

50% of the new performance management system, introduced after a very extensive behaviour-based training programme, was concerned with qualitative behavioural assessment, rather than quantitative achievement of goals. So it was all pretty good stuff really, and I’ve certainly benefited hugely from the whole experience of going through that.

Hi Tom,

My opinion is that any organic change of a viable object follows the same pattern. Culture is based on shared beliefs and usually nobody argues that it relates to viable nature. Software nowadays is very often referred to as a software product. This brings those bits and bytes into the human ecosystem. And that’s why we may consider (and they don’t hesitate to really do it) viable principles for software (product!) development.

So software development is a kind of social relations and a part of enterprise game. Having common basis for culture and software, why one can’t apply findings of software development to another domain, say culture development?

Moreover, I know the place in software development cycle culture changers should look into first. For digital era this exercise is quite easy. It is definitely about enterprise architecture frameworks and patterns, which start with business ideas and inspirations up to implementation governance.

Using your Enterprise Canvas notation and idea of maturity levels (as a software development stuff) one could construct pretty fine culture development framework, I think.

Regards,

Sergei T

Thanks, Siarhei. Probably the main thing that I’d want for people to take away from this post is that trying to treat culture-change like classic top-down Waterfall is rarely a good idea; but conversely, treating software-development like culture-change probably is a good idea.

Which is where this links up with your note about: “So software development is a kind of social relations and a part of enterprise game. Having common basis for culture and software, why one can’t apply findings of software development to another domain, say culture development?” Once we do understand that software-development does necessarily include a significant cultural/wild-problem element, then yes, we can take the learnings from Agile (and from, say, Deming’s work before it), and apply them to cultural-change. But we need to make that paradigm-shift first – otherwise we fall back to trying to treat culture as an exercise in Taylorist-style ‘programming of the robots’, which does not go down well, in any industry.

On “It is definitely about enterprise architecture frameworks and patterns, which start with business ideas and inspirations up to implementation governance” – well, yes, I would hope so. The catch is that it’s just not there in any of the ‘mainstream’ so-called ‘EA’-frameworks at all – it’s not in TOGAF, or DoDAF/MoDAF, it’s in FEAF merely as a blank placeholder, it’s not in industry-specific standards such as eTOM/Frameworx, or SCOR (to my knowledge – I’ve only read SCOR in summary). It’s only the ‘crazy-guys’ like me or Doug McDavid or Nick Malik or Sally Bean or Ruth Malan or Simon Brown or Len Fehskens or… – well, there’s quite a few of us now, thankfully! – that seem to bother with the human dimension at all.

On “Using your Enterprise Canvas notation and idea of maturity levels (as a software development stuff) one could construct pretty fine culture development framework, I think” – well, yes, I’d hope so on that too. 🙂 For software-architecture itself, one person whose work I would strongly recommend on this is Simon Brown, CodingTheArchitecture.com: if your main concern is deeper into software-architecture, and less at the broader-enterprise scope, you’re probably better starting there than here. (Yes, I can and do ‘sell’ other people’s work in preference to my own, if I think it better fits the clients’ need! 🙂 )

Quick points:

I agree with Sally that SW development is not always “tame”, and often fraught with mismatches with working cultures.

I wonder if the point came up that any sizable organization is an amalgam of multiple cultures (the engineers, the sales-people, the board room …) and often the dynamic tension involved is very healthy.

I also wonder, Tom, if you’d mind pointing us to where you explain that Simple and Straightforward quadrant of SCAN falls in the “wild-problems” domain?

Thanks, Doug. And yes, on “any sizable organization is an amalgam of multiple cultures”, you’re right, I don’t think it’s mentioned directly, and yes, that in itself is one class of wild-problem elements for a culture-change. Each sub-culture has its own story, its own language, its own expectations; each is a ‘system’ and ecosystem in its own right; hence the whole-enterprise is a system-of-systems, an ecosystem-of-ecosystems. The tension is healthy when those differences are acknowledged and respected – but a common source of under-the-counter explosions when one sub-culture tries to ride rough-shod over all of the others….

On “I also wonder, Tom, if you’d mind pointing us to where you explain that Simple and Straightforward quadrant of SCAN falls in the ‘wild-problems’ domain” – okay, this’ll take a short while to explain…

There’s some on this in the post ‘Selling EA – 3: What and how’, but this probably needs a bit more detail.

In the earlier diagram above, with the tactics-keywords, you’ll see the default or generic position of the ‘boundary of effective-certainty’, which assumes that that boundary is in the same ‘position’ regardless of whatever’s happening on the vertical-axis, the difference between ‘plan’ (distant from ‘NOW!’) and ‘action’ (close to ‘NOW!’). In other words, it’s just a straight line. And the resultant mapping onto the context gives us what are four sort-of-equal-sized domains – which makes the labels on the backplane easy to read, and so on.

To be honest, it’s a bit of a fudge – somewhat of an over-simplification to make it easier to read.

In the real world, there are all kinds of distortions going on – and that’s what we can see in the second diagram, showing that ‘skew’, where the boundary-line is not straight up-and-down, but skewed way over to the left-hand side in the lower ‘rule-based action’ domain, aka ‘Simple and Straightforward’. What I’m trying to say with that particular crossmap is that what looks like a predictable tame-problem at the planning-level (i.e. ‘above the line’, up into the Complicated domain) actually turns out to be a lot less predictable when we get into the real-world action. In effect, the ‘Simple and Straightforward’ domain in that second diagram – those aspects of the context that are amenable to tame-problem tactics in real-time action – is represented by the tiny little scrunched-up bit of blue to the left of the red boundary-line. But I’ve left the backplane as it was, if greyed-out here – otherwise the caption would be unreadable, because the ‘Simple’ domain-caption should be only within or behind that tiny bit of blue.

But yeah, you’re right, it’s a bit of a kludge: I probably ought to write a separate blog-post to explain this one specific point somewhen. (When sanity permits, or something like that?) But thanks for kicking me to explain it, though – it does need a better explanation than it’s had so far. Just because I know what the skew is ‘supposed to mean’ doesn’t necessarily mean that anyone else understands what it’s supposed to mean – and yeah, I do need to be more careful about that point, don’t I? Apologies, anyway.

A lot of violent agreement that using sw development is probably not a great analogy and, as I tweeted to James, there are better.

Perhaps I lack imagination or perhaps it’s the late hour, but I cannot think of any so my brain reverts to the chain-linked fence that is my default analogy for any complex system.

What would your analogy be?

Richard Kernick asks:

“What would your analogy be?”

Two immediate observations:

First, this presupposes that an appropriate analogy exists, and that the appropriate way to approach the problem of cultural change is by analogy.

Second, apropos of the first observation, I have become tiresomely repetitive of something Huston Smith said in a course on Eastern religions I took a long time ago – “an analogy is like a bucket of water with a hole in it; you can only carry it so far”.

Now the ramble begins…

We as architects have a fatal attraction for models, and analogies are a sort of model. The idea behind models is that if a model of a system and that system are enough alike, things that are true of the model will likely be true of the system. The problem is that models are, by design, significant simplifications of the systems they are models of; otherwise we would just build the system, rather than build a model of it. I use the word significant deliberately, for its two senses – its quantitative sense, and its qualitative sense. The quantitative sense derives from the fact that what makes a model useful is the large amount of stuff we leave out of it. The qualitative sense derives from the fact that the stuff we leave out had better be “insignificant” in the sense that it doesn’t materially affect the properties and behaviors we are interested in using the model to understand, and the stuff we leave in had better be significant, i.e., essential (necessary and sufficient), to the properties and behaviors we are interested in using the model to understand.

The thing that makes culture a “wild problem” as Tom says is that culture is a manifestation of the group behavior of individual people, and as individuals, people are nondeterministic and autonomous. They are, as such, extremely difficult to model.

And while we can certainly “design” a set of desired properties and behaviors of a group of individuals, it isn’t the designing that’s ultimately the problem, though it is a difficult problem; it’s the implementation and operation of the artifact the design is of that is the challenge. It’s only when we make and use something that we can get any real sense of whether our design for the thing is “good”, or at least reflective of our intentions.

Lately I have been harping on the idea that we can’t divorce “designing” from “making” and “using”; we design things so we can make them and we make things so we can use them, and the making and using of designed artifacts are themselves artifacts that can (indeed, should if not must) be designed.

So, we can certainly design things that involve people, to the extent that we think we understand how people “work”, as expressed by the implicit and explicit models we have for their properties and behaviors. Let’s assume for the moment that we can in fact “design” a culture (and further, design its “making” and “using”) such that if it were “correctly” (i.e., in accordance with these designs) implemented and operated we would get the desired outcomes, though I concede that our ability to create such designs may be illusory. It’s the implement and operate part that is the real challenge.

In comparison to people, software is an extraordinarily tractable medium. Insightful observers have long argued that this is the pitfall of software development – the apparent tractability of the medium leads us to believe that we can easily change it however we see fit. Indeed, the entire agile movement is founded on this idea. And while the relationships between the elements of a software artifact may intrude, at least the elements themselves, unlike people, are comparatively deterministic and non-autonomous.

Alexander Pope wrote “The proper study of mankind is man” (in An Essay on Man: Epistle II, not to be confused with Isaiah Berlin’s book, The Proper Study of Mankind), and there are two very substantial disciplines addressing the behavior of people as individuals and in groups – psychology and sociology. The search for an analogy on which to base an approach to cultural change founders on the fact that there are no disciplines analogous to psychology and sociology from which we could derive insights that we could apply to culture, other than psychology and sociology themselves.

len.

Len, long way to say you can’t think of one either 😉 .

You’re right, of course, models and analogies only get you so far. That doesn’t mean, and I don’t think you are saying, they don’t have their place. They tell stories, and people relate to stories: that allows the receiver to relate a known paradigm to an unknown one.

Having said to James that there are better analogies I thought it only right to back that up with an example. As I haven’t come up with one and nor has anyone else, perhaps this is a case where no useful analogy exists.

On the other hand if I were to talk about culture change to a bunch of developers with little experience away from the keyboard I might use this analogy. Models, analogies and stories must be looked at in context of the audience.

Richard Kernick replies to me, somewhat, I think, in jest:

“Len, long way to say you can’t think of one either.”

No, I’m saying I’m not going to try to think of one, because, first, I don’t think this is the right way to approach the problem, and, second, I don’t think a useful one exists.

Richard continues:

“perhaps this is a case where no useful analogy exists”

And that is exactly what I was making a case for.

Richard continues:

“I don’t think you are saying, they don’t have their place”

Correct, I’m not saying that. I’m saying we have to be very careful about how we use models and analogies. I have done a great deal of modeling in other disciplines (the flight of small rockets, the three-dimensional virtual modeling of protein molecules, and the emulation of computer systems as a means of developing real-time process control software in the absence of timely availability of the actual hardware), and in these cases we rigorously tested our models against the behavior of real systems before we released them for general use. Rarely have I seen anyone in the enterprise architecture community test their models for their suitability to the uses to which they will be put. I’ll willingly concede that some of that inevitably “goes with the turf”, but again, that’s something that makes working in this space so uniquely challenging.

Finally, Richard writes:

“Models, analogies and stories must be looked at in context of the audience.”

Yes, exactly, and I think we could be more explicit about doing so – to use a phrase that I am inordinately fond of, we should be able to offer a convincing rationale for why we believe they are “fit for purpose”.

len.

Thanks for your reply, Tom, and certainly no apologies needed on my account! I just happen to like pictures of concepts, and love to try to understand them.

Back to the culture issue, I should probably be a bit clearer about the thought that certain dynamic tensions are positive, even though uncomfortable for the participants. I’m thinking, for instance, of the tension between the culture of lawyers and the culture of R&D, where a balance of risk-promotion and risk-aversion is generally healthy, and where a key issue is where is the right balance point? Or, how about the culture of sales, that offers all kinds of wild options, assuming “If we can sell it, the engineers can build it” whilst the engineering culture abhors the promise of something not proven to actually work. Or, the Stafford Beer tension between System Three with its focus on the day-to-day, and making the numbers, and System Four, with its eye on the future and the wider market. Adding System Five, we have the System 3-4-5 homeostat, which (the way I see it) has fundamental cultural aspects that are healthy when in balance, and actually pathological when one is weak, atrophied, or missing.