On sensemaking in enterprise-architecture [4]

How do we make sense of uniqueness in enterprise-architecture? How do we support decisions at ‘business-speed’ – especially when the context is in part unique? And what architectural support do we need to provide for sensemaking and decision-making at business-speed?

In the first part of this series we looked briefly at uniqueness, and why it’s a crucial if often-forgotten aspect of enterprise-architecture.

In the second part we explored how sensemaking and decision-making change when time-available is squeezed down to business-speed, using the real-life case of US Airways Flight 1549 as a worked-example.

In the third part we reviewed some of the tactics used for sensemaking at ‘business-speed’, and why it differs from conventional ‘considered’ sensemaking.

In this final post for the series, we’ll look at how we can balance all the different sensemaking techniques in real-world business-practice, and the architectures that we’d need in place to support this.

Maintaining the balance in real-time

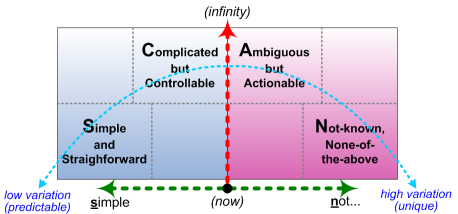

What came up from the previous post in this series – and perhaps even more from the post ‘Domains and dimensions in SCAN’ – is that we have three distinct dimensions we’re dealing with in sensemaking:

- modality – the extent of perceived ‘controllability’ versus ‘possibility and necessity’

- available-time – the amount of time remaining before an action-decision must be made

- repeatability – ability to reliably recreate the same perceived results

At the highest speed, just about all we’ll have time to see is the modality – in particular, whether it’s Simple (a true/false choice) or Not-simple (a broader range of ‘possibility and necessity’).

As we saw in the third post in the series, we have known-tactics to work on either side of that split. The crucial concern is to use the appropriate tactics – which means we need to know which side of that Simple/Not-simple boundary we’re on.

The reason why this is important is that we also need to balance the variety in the system with reliability and repeatability of results. A Simple technique such as a predefined work-instruction allows for only a small amount of variety in the system – which is fine if there is only minor variety in the system. But if the variety, or uncertainty, is higher than the technique allows, we’re going to be in trouble – and, given the limited perspective of the technique, we may not even know it until too late, let alone how or why it failed. Conversely, using a Not-simple technique in a low-variety context is not only overkill – too many options! – but is probably less reliable as well. To summarise:

- a Simple technique would give the most repeatable results in a low-variety context

- a Simple technique is unreliable in a high-variety context

- a Not-simple technique is overkill (‘too complicated! too ambiguous! too uncontrollable!’) in a low-variety context

- a Not-simple technique is the only way to gain repeatability in a high-variety context
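For those who prefer to see it as a lookup-table, the four-way summary above could be sketched roughly as follows. This is only an illustration: the names `Technique`, `Variety`, `FIT`, `assess` and `preferred_technique` are my own assumptions for the sketch, and the outcome-labels are paraphrased from the list above.

```python
from enum import Enum


class Technique(Enum):
    SIMPLE = "Simple"
    NOT_SIMPLE = "Not-simple"


class Variety(Enum):
    LOW = "low"
    HIGH = "high"


# Outcome labels paraphrased from the four-point summary in the text.
FIT = {
    (Technique.SIMPLE, Variety.LOW): "most repeatable",
    (Technique.SIMPLE, Variety.HIGH): "unreliable",
    (Technique.NOT_SIMPLE, Variety.LOW): "overkill",
    (Technique.NOT_SIMPLE, Variety.HIGH): "only route to repeatability",
}


def assess(technique: Technique, variety: Variety) -> str:
    """Return the expected outcome of using a technique in a given context."""
    return FIT[(technique, variety)]


def preferred_technique(variety: Variety) -> Technique:
    """Pick the side of the Simple/Not-simple split that matches the variety."""
    return Technique.SIMPLE if variety is Variety.LOW else Technique.NOT_SIMPLE


if __name__ == "__main__":
    for technique in Technique:
        for variety in Variety:
            print(f"{technique.value} in {variety.value}-variety context: "
                  f"{assess(technique, variety)}")
```

The two-valued `Variety` is a deliberate over-simplification: in practice variety is a spectrum, and the whole point is that we need to know which side of the boundary we are on before we choose.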

Another key trade-off here relates to skills and experience: any Not-simple technique is going to rely on skills and experience, whereas on the Simple side it usually won’t. (In fact that’s one of the reasons why it’s Simple: anyone or anything can do it, as long as they follow the same Simple rules.) We gain the best repeatability and reliability at the lowest effective cost by achieving and maintaining the right balance between Simple and Not-simple.

Yet in many real-world systems, or perhaps most, the effective-variety itself may change – sometimes even on a moment-by-moment basis. Which means that to gain that required repeatability and reliability, we need to be able to ‘move’ back-and-forth across that spectrum of modality, in line with the changes in variety. This can be tricky… – and especially so in a bureaucratic command-and-control context where everything ‘should’ be predictably Simple, but isn’t…

This is actually the role of sensemaking: identifying the variety in the context, and the nature of that variety. Once we’ve ‘made sense’ of what’s going on, we can then decide what to do about it – and do it. In most present-day business contexts, we need to be able to do this at ‘business-speed’ – in other words, fast. As a quick summary:

- the moment we need to ‘make sense’ of something, then by definition we’ve moved over to the Not-simple side of the scale

- we gain the best reliability and speed by pushing it back over to the Simple side

- we maintain the best flexibility – but perhaps at a cost in required skill-level and ‘understandability’ – by holding it on the Not-simple side

(Note too that in skilled-work ‘Simple’ is often subjective, not objective: it’s experienced as ‘Simple’ by that individual – such as via ‘body-learning’ from long practice – but not necessarily ‘anyone can do it’ in the usual sense of “follow these step-by-step instructions in the manual”.)

Adding time to the sensemaking equation

All of the above is what happens at business-speed. Yet in the same way that contextual-variety can change, so too can the amount of time-available before we have to act – in other words, the available time for sensemaking itself. Given more time, we can bring more ‘considered’ sensemaking-techniques into the mix – particularly the various flavours of ‘systems-theory’ and the like.

For SCAN, it’s a bit like stretching a roller-blind outward from the ‘now’ to give us a bigger picture of our available sensemaking-options:

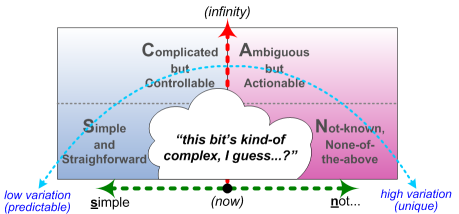

Stretching outward in time also highlights important nuances between Simple and Not-simple – a gap that the ‘considered’-techniques would seem to be ideally suited to fill. This is where the word ‘complex’ tends to come into the picture…

Managing complexity is, uh, complex… 🙂 – but the real key is probably around variety. For example, on the ‘organised complexity’ side of the scale, Roger Sessions’ technique Simple Iterative Partitions aims to reduce (or, ideally, remove) complexity in IT-‘solutions’ for a context – in effect, identifying what is or is not in the Complicated ‘domain’, and assessing and clarifying the factors that could bring it down into a real-time Simple ‘solution’. On the ‘disorganised complexity’ side of the scale, the various forms of complexity-theory and suchlike will aim to bring the higher volatility, uncertainty, complexity and ambiguity down into patterns and leverage-points and the like that would be usable on the Not-simple side of the story – preferably in a way that would be experienced as ‘simple’ by the agents for that action.

Yet all of those ‘considered’ sensemaking-techniques take time to execute – and we forget that fact at our peril. Available-time has a nasty habit of collapsing to nothing – and if we’re in the middle of a ‘considered’ sensemaking-process when that happens, we can find ourselves with some seriously-scrambled notions of what makes sense and what doesn’t, and a serious misalignment between context-variety and what we think of as ‘simple’ in that context. That’s where things can get messy…

And that’s where we also discover that, yes, a little knowledge can indeed be more dangerous than no apparent knowledge at all – not least because it can slow things down to the point where they really don’t work.

[A classic example of this in juggling is trying to think too much about what we’re doing whilst we’re doing it… gravity won’t wait while we try to think out our every move!]

Doing the ‘wrong’ thing at real-time is often okay if we’re running fast enough to recover – especially if we design for ‘safe-fail’ or ‘graceful failure’ – and if we have some solid idea of our intended outcome. And we can often get away with a Simple decision-structure that tries to calculate and compensate for every Complicated factor, as long as the cycle-time is fast enough to do this at or near-real-time – as it can be in many IT-based ‘solutions’ for the organised-complexity side of the scale. But I’ve learnt the hard way to be very wary of certain forms of complexity-theory, because they’re not usable at ‘business-speed’: they shy away from ‘Chaos’, deride the Simple, and insist that we should only work with their concept of the Complex – a ‘solution’ which requires time that we rarely have available to us in real-world practice.

[One well-known example asserts that the only relationships between the Simple and a real-time Not-simple are either a collapse into chaos, or a rigid ‘take control’ – neither of which actually makes much sense in practice. It also indicates that the only reason to be in the Not-simple – and to leave it, as quickly as possible – is to garner information to assess at relative leisure in tools for the Ambiguous ‘domain’: we may bounce back-and-forth between those two ‘domains’, but the focus is always away from the real-time.

In effect, that structure explicitly leaves us with almost no means whatsoever to deal with real-world variation of variety in a real-time context – which is, however, where almost all real-world sensemaking and skill-based work takes place. Not helpful…]

In practice, we would use time-variation – or rather, the easing-off of time-pressure – to move back-and-forth between a ‘considered’ sensemaking-framework to gain greater understanding of how to work with complexity, and the real-time Simple/Not-simple balance to take action at business-speed. This is what all ‘learning-loop’ processes aim to do, such as PDCA, OODA or the various Agile development-methods. But when a ‘considered’-type framework purports to resolve ambiguous-complexity at business-speed, watch out – because it doesn’t work.

Architecture for sensemaking

So as enterprise-architects and the like, what can we usefully do about all of this? Some practical suggestions:

— Identify the types of sensemaking and decision-making required in each context. Document these in the architecture-models.

[Where appropriate, note also the skills and skill-levels required for the respective sensemaking and decision-making – see later with regard to skills.]

— For inherently-Simple methods such as employed in machines, most IT-systems and rule-bound (‘regulated’) human processes, identify the limits of variety covered by those systems and social structures. If – as is often the case – the real-world context may at times contain a higher range of variety, we must identify, design, develop and test methods to identify when those limits may be breached, and Not-simple processes that can take over as soon as those limits are breached.

[This is a typical concern for business-continuity / disaster-recovery planning, recovery from security-breach, or recovery from social ‘fail’ such as public-relations or anti-client problems, ethics breach, lawsuit or supply-chain failure.]
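As a rough sketch of that ‘limits of variety’ idea: a Simple rule is wrapped in a guard that checks whether the case falls inside the variety the rule was designed for, and hands over to a Not-simple process the moment it doesn’t. Everything named here (`GuardedProcess`, the refund example, the amount-limit of 100 and the list of reasons) is hypothetical, invented purely for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class GuardedProcess:
    """A Simple rule plus a check on the limits of variety it can cover."""
    within_limits: Callable[[dict], bool]       # detects a breach of the limits
    simple_rule: Callable[[dict], str]          # the predefined work-instruction
    not_simple_handler: Callable[[dict], str]   # takes over when limits are breached

    def handle(self, case: dict) -> str:
        if self.within_limits(case):
            return self.simple_rule(case)
        # Outside the designed-for variety: do not force the Simple rule.
        return self.not_simple_handler(case)


# Hypothetical example: small refunds for known reasons are rule-bound,
# anything else goes to a skilled person.
refunds = GuardedProcess(
    within_limits=lambda c: c.get("amount", 0) <= 100
    and c.get("reason") in {"damaged", "late"},
    simple_rule=lambda c: "auto-refund",
    not_simple_handler=lambda c: "escalate to case-worker",
)

if __name__ == "__main__":
    print(refunds.handle({"amount": 50, "reason": "late"}))
    print(refunds.handle({"amount": 5000, "reason": "late"}))
```

The design-point is that the limit-check is explicit and testable in its own right, rather than buried inside the rule, so that we know when the Simple technique has run out of road.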

— Identify the ‘business-speed’ cycle-time for sensemaking – in other words, establish just how much time is available before action-decisions must be made, and hence how far ‘considered’ sensemaking-techniques can be used in customer-contact processes and the like. Very tight cycle-times with a significant amount of Not-simple sensemaking will need training in learning-loops such as real-time OODA; longer or more variable cycle-times can use more mainstream learning-loops such as PDCA, typically backed up by After Action Review and the like – but again, training will be required.

[Note that learning-loops will typically also need information-system support of various kinds, including availability of ‘lessons-learned’ wikis and similar tools to support real-time sensemaking.]

— Identify options to bring the Not-simple towards the Simple. More specifically, identify processes where ‘lessons learned’ and other idea-gathering in the Not-simple ‘domain’ can be brought into the Ambiguous as patterns; where appropriate Ambiguous emergent patterns can be brought into the Complicated ‘domain’ and potentially be distilled into algorithms; and where Complicated algorithms can be reduced to Simple rules and work-instructions. In the sciences, this sequence is classically described as ‘idea to hypothesis to theory to law’.

[Note that only some items can be moved from one ‘domain’ to the next in this sequence. Some Not-simple items will always remain inherently unique and uncertain, even though a pattern can be identified for them – such as the fission-point for a single atom in radioactive decay, relative to the statistical half-life for atoms of that type. Some Ambiguous items will always remain inherently uncertain – such as many social processes, or iterative processes where the result of one loop acts as a cause for the next. And some Complicated algorithms – especially those with longer delay-loops – cannot be reduced to Simple real-time rules. The point here is to do what we can to move things towards the Simple – and to acknowledge those items that can’t be moved on any further. More on this in another post, anyway.]
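The ‘move it towards the Simple, where we can’ sequence could be sketched as a one-step promotion along an ordered chain. The names `CHAIN`, `SCIENCE_ANALOGY` and `promote` are assumptions for this sketch only, and the pairing of each ‘domain’ with a stage of ‘idea to hypothesis to theory to law’ is simply positional, following the order given in the text.

```python
# Ordered from least to most reducible, following the sequence in the text.
CHAIN = ["Not-simple", "Ambiguous", "Complicated", "Simple"]

# Positional pairing with the classic description from the sciences.
SCIENCE_ANALOGY = dict(zip(CHAIN, ["idea", "hypothesis", "theory", "law"]))


def promote(domain: str, can_move: bool) -> str:
    """Move an item one step towards the Simple, if it can be moved at all.

    `can_move` stands in for the real assessment: some items are inherently
    unique or uncertain and must stay where they are.
    """
    position = CHAIN.index(domain)
    if not can_move or position == len(CHAIN) - 1:
        return domain
    return CHAIN[position + 1]


if __name__ == "__main__":
    print(promote("Not-simple", can_move=True))    # a lesson learned becomes a pattern
    print(promote("Ambiguous", can_move=False))    # e.g. a social process stays put
```

Note that `promote` moves one step at a time and never skips a ‘domain’: the hard part in practice is the judgement behind `can_move`, which no code is going to make for us.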

— Identify processes, or parts of processes, which rely on skill and experience – which by definition will place some or all of their activity on the Not-simple side of the scale. (Conversely, anything on the Not-simple side of the scale is likely to require skills and experience.) Identify the respective skills and required skill-levels in each context. Document and design for these within the architecture.

[In the terms of the service-content model used in Enterprise Canvas, these are usually classed as capabilities. Wherever appropriate and practicable, these should be documented and tracked as such within the architecture-models.]

— For all identified skills, identify the processes by which these skills will be developed, either by outside organisations, or preferably in part via learning-loops in live business-processes. Again, document and design for these within the overall enterprise-architecture.

[Note that key parts of skills-development can only take place when time-pressure is slackened-off enough to do ‘considered’-sensemaking on personal aspects of the skill over significant periods of time – typically over months or years. There is a huge kurtosis-risk for any organisation that maintains time-pressure such that it does not allow sufficient time for that type of skills-development or skills-transfer to take place. Again, I’ll explore this in another post soon, but for now, see the Sidewise posts ‘10, 100, 1000, 10000’ and ‘Where have all the good skills gone?’.]

— Identify the ‘practice-spaces’ required for skills development, typically either ‘safe-fail’ or (usually for higher-level skills) ‘brown-out’ or ‘graceful failure’. Describe, document and design for these in the overall architecture.

[This will typically include simulation and real-time ‘games’, and explicit practices for observation and reflection, and for skills-transfer from more experienced practitioners. This should also include specific social-structures to support skills-learning, such as the explicit ‘no-blame’ rules that underpin the US Army’s usage of After Action Reviews.]

It may also be interesting – and potentially important – to explore Mask-effects or options within the organisation and its context, and structural support for the ‘Don’t Panic!’ theme, both summarised in the ‘Not-so-simple’ section of the third post in this series.

Anyway, more than enough for now, I think? Over to you if you wish: comments or suggestions, anyone?
