More on the ‘Why’ for new toolsets

As you may have noticed, I’ve been struggling somewhat to fully explain the ‘Why’ behind all of this talk of ‘new toolsets for enterprise-architecture’ and related disciplines. And then, out of the blue, via a retweet from Phil Beauvoir of ‘Archi’ fame, comes this:

Coding is not the fundamental skill;

modelling is the new literacy.

Yes, exactly. Exactly.

Okay, it’s from a guy I don’t know, called Chris Granger, in a current blog-post, ‘Coding is not the new literacy’, about a somewhat different topic, namely the over-hyping that we see so much at present about getting schoolkids to write computer-code.

But he’s absolutely right: coding itself is not the problem. Coding isn’t hard (relatively-speaking, anyway): anyone who can write and pay attention to instructions can write code without much difficulty. As Chris Granger says, “coding, like writing, is a mechanical act”.

It’s knowing what to code that’s the real problem. Coding isn’t hard; but making sense of a context, and deciding how to describe it in a form that can be coded? – yeah, that is hard…

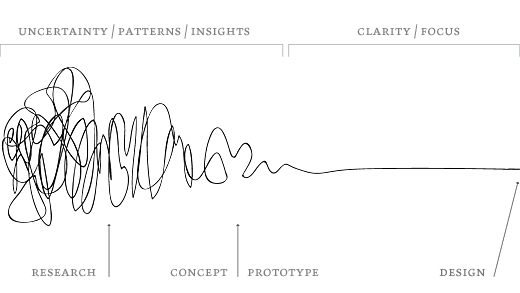

Which is where the Squiggle comes into the picture – that depiction of the overall development-process, from first fleeting ideas to nominally-‘finished’ product:

Which is where modelling comes into the picture – along the full length of the Squiggle.

Which is where toolsets to help describe the outcome of that modelling would come into the picture.

Which is where toolsets that can cover the whole of the Squiggle – and not merely disjoint fragments of the Squiggle – would come into the picture.

Hence that long stream of posts over the past few weeks.

Ultimately, though, the toolset aspects of this are relatively straightforward. We just need to buckle down and do it, that’s all: apply enterprise-architecture to our own work.

But modelling in itself? – ah, there’s a real problem there…

A catch.

A glitch.

A problem that’s right at the root of the literal ‘architecture of the enterprise’, at just about every possible scale.

Yet a problem so huge that it seems most people dare not look at it at all…

We could summarise the core of the problem in just two lines:

— modelling a context requires us to ask questions about the context – every possible question that might usefully apply in that context

— but asking questions is often considered unacceptable in the extreme – in particular, asking any questions that might cast doubt on the certainties, structures, politics and ‘rights of authority’ in enterprises of any form

Those two requirements don’t mix well at all…

Hence, on the one side, we have the real-world need, such as described in Maria Popova’s post ‘The Psychology of Why Creative Work Hinges on Memory and Connecting the Unrelated’.

And on the other side, we have the painful reality, as described, for example, by David Edwards in his Wired post ‘American Schools Are Training Kids for a World That Doesn’t Exist’.

If we’re honest about it, the so-called ‘education’-system of the present day isn’t really much about education at all: it’s about training, about compliance and conformance to the expectations and demands of society in general – or, to be even more blunt about it, to the expectations of a society that largely no longer exists, of supposedly-guaranteed jobs, strict segregation of socioeconomic classes, certainty, control, control, control. Which would perhaps be fine, if that society’s assumptions matched up to the real-world – which they don’t. Not any more.

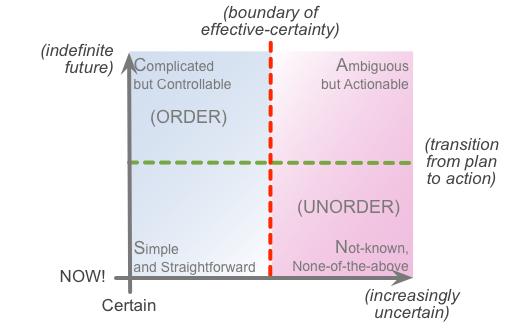

There’s plenty of politics around this, of course – such as Ivan Illich’s ‘Deschooling Society‘, or Postman and Weingartner’s ‘Teaching As A Subversive Activity‘, to give two now quite-old examples. If possible, though, I’d prefer to sidestep the politics, and look at the context from a SCAN perspective – and perhaps particularly the crucial distinction between order and unorder:

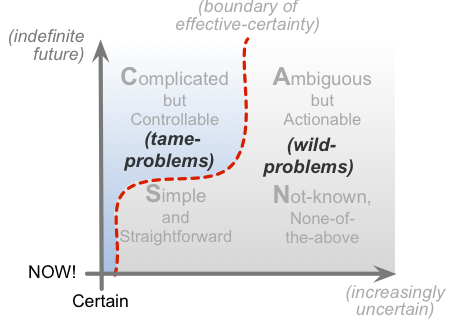

At present, mainstream school-education, from around 8-16yrs, is almost exclusively ‘order’-based, focussed around tests, true/false answers, quantification, exact like-for-like comparisons – repeating rote-fashion what someone else has taught as ‘the truth’ or ‘the facts’, with little to no room for variation or individuality. (As an old phrase puts it, “originality will get you either A or F, with nothing in between”.) In other words, tame-problems:

Tame-problems are (relatively) easy to teach, and to test: the problem has already been solved, and, importantly, stays solved, such that everyone should get the same answer every time. Most relevant for this context here is that no modelling is required – it’s already been done by someone else in the past, and (in theory, at least) won’t change in the future.

But the catch, as per the diagram above, is that tame-problems are only ever a subset of any context – for the most part, a subset that relates only to a somewhat-imaginary order-based view of the world (“lies-for-children”, as Cohen and Stewart put it in The Science of Discworld), and that, even in the more ordered parts of the context, doesn’t work as well in practice as it seems to do in theory. The rest of the context consists of wild-problems – unordered ‘problems-in-the-wild’ – which don’t necessarily conform to expectations, for which there is often no ‘final correct answer’, and which are often too unique to compare with anything else anyway. For those wild-problems, we do need to be able to model them – because it’s the only way we’d have any chance to get the outcomes we need.

Classically, a school-type approach – or any training, really – will focus on method. Which, yes, does work well with tame-problems, or anything well over to the left-hand ‘order’-oriented side of the SCAN frame. But as soon as we have to work with wild-problems, anything values-based, anything with any form of unorder-complexity or uniqueness, then methods alone are not enough: we need to go back one key step, to understand from where methods actually arise.

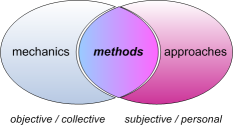

Particularly for anything involving any real skill, each person necessarily arrives at their own distinct methods, derived in part from the ‘objective’ aspects of the context – the mechanics – and the ‘subjective’ aspects – their own individual approaches to the context, derived from individual difference in body, perception, history, gender, socialisation and much, much more. Hence in practice, method arises at the intersection between mechanics and approaches – which we could summarise visually as follows:

By contrast, school-‘education’ all but ignores the subjective side of this story: every student is, in essence, assumed to be the same. The trade-off is that if we do this, we can test and grade them as if they are the same – but in the process, we actively shut down the students’ internal processes for individual skill, individual creativity and (as per this context) the ability to make sense of and respond appropriately to anything different, anything ‘new’. All of which are very much needed as soon as students step out from the cloistered confines of school and out into the real-world: and especially so in a world that is changing fast, in almost every possible way – as is certainly the case right now.

Which brings us back to where we started, with Chris Granger’s comment that:

Coding is not the fundamental skill;

modelling is the new literacy.

It should be clear why there’d be so much of an ‘education’ focus on “coding is the new literacy”: it’s something that can easily be taught within the existing school-system, without requiring any real changes at all – it’s just another predefined ‘subject’ to add to the existing predefined ‘curriculum’, really. Yet ‘coding is the new literacy’ contributes almost nothing to help with the real problem, and real need – the literacy of modelling, of learning how to make one’s own sense of the world, in order to act appropriately and ‘on purpose’ within it.

Which brings us back to the Squiggle, and the need for tools that help ‘join the dots’ across the whole of the Squiggle – because without that support and whole-of-context continuity, learning how to model and make sense of the world becomes much, much harder than it would otherwise naturally be.

A lot more we’d need to explore on this: but perhaps that phrase “modelling is the new literacy” would be a good place to start.

—

Bonus link: see the video of Bret Victor’s talk ‘Inventing On Principle’ – just under an hour, but a real eye-opener all the way through, especially from a toolsets-perspective. (Hat-tip to Chris Granger, Rob Attorri and the Light Table blog for the link.)

My first thought & personal experience is that there is IMHO not much difference between coding/modelling/mapmaking, so:

Not so strange because the three fields are more and more intertwined (e.g. GIS/mapping libraries & tools, parametric modelling, LEGO® MINDSTORMS® EV3, generative art, games with level/world editors…)

Agreed in general, Peter – it’s just that from what I’ve seen of most ‘xxx-literacy’ programmes at school-level, they just focus on coding as a mechanical act, and keep it simple enough to be able to apply something close to a crude true/false exam/test at the end of it. Little to no modelling or mapmaking at all – and certainly no mention of the ‘meta-’ layer, without which modelling and/or mapmaking are going to be very seriously constrained.

There are people who push to go beyond that, of course – the folks behind the Raspberry Pi being one of the more visible examples these days. Yet even there it’s kinda telling that although the Pi was originally intended as a tool for schools to use, the main take-up (in the millions) was by hobbyists wanting an IoT-capable device that was simpler and cheaper and a bit more ‘mainstream’ than an Arduino. It’s only now – a couple of years later? – that the Pi is starting to make some real headway in schools. Illustrates the problem quite well, I think?

I made my remark because I thought the sentence I quoted defined the real difficulty of coding/modelling/mapmaking so well 🙂

I can’t comment on the situation at schools in general. I’ve three kids between 8-14 and I’m quite happy with how the school systems work. I deliberately say school systems because there is a huge difference between different schools and between teachers. And in the Netherlands parents can have a lot of influence on the level of the (local) school system, and they have a certain amount of freedom to choose between schools that implement the rules set by the government differently. And schools have a certain amount of freedom to offer different kinds of classes to distinguish themselves (e.g. extra art, languages like Chinese, sports, science…).

So coding in (Dutch) schools is something that can be implemented in many ways, one of which is https://www.robomindacademy.com/go/robomind/home which is not too bad, I think, and which my son would love to do 🙂

Hi Tom

I can’t disagree with you about the basic problem with education: not so much teaching coding as such, but teaching it as a mechanical act, is very typical of that problem. I do, however, have a problem with the statements “coding, like writing, is a mechanical act” and “anyone who can write and pay attention to instructions can write code”. Two reasons for that.

The second statement (which is yours) suggests a view of what coding is about that has little to do with the work that programmers do today – or in fact for quite some time now. Coding following instructions belongs to a world where people wrote COBOL using coding sheets. These days coding and modeling are intertwined – not always well but to the extent that discoveries are made during coding, which change the model. To me this is a necessary consequence of SCAN – where coding belongs in the C or A quadrants.

The first statement not merely regards coding as mechanical but writing too apparently. Now, if “writing” applies only to the physical act of writing, that might hold. But that’s rather artificial. How many people (apart from copy typists, if they still exist) write following a set of instructions? It’s a creative act, which can be wholly improvisational or in which a pre-existing conceptual model is not merely built but continually changed and refined as the real thing gradually replaces the model.

Perhaps that’s not what you meant to say. It’s what I read.

cheers

Stuart

Thanks, Stuart – and yeah, it’s not quite what I meant, though that’s my fault, of course, not yours… :wry-grin:

Part of my reply to Peter above would also apply here: that in practice schools are tending to treat coding as a ‘mechanical act’ – barely one step above basic keyboard-usage. The modelling/coding/testing loop is something that tends to happen outside of a mainstream school-type context – especially wherever a ‘xx-literacy’ phrasing is used, because its usage immediately implies that someone is going to test and grade and compare in nominally like-for-like terms, which almost by definition forces it into ‘mechanical act’ rote-learning (in SCAN terms, extreme left of S/C) rather than sense/model (more toward A/N in SCAN).

By the way, in SCAN terms, I wouldn’t necessarily say that “coding belongs to the C or A quadrants” – in fact, as above, the ‘mechanical act’ of coding in terms of a predefined grammar (as per virtually all the programming-languages I know) is more in the S/C domains (S as the actual ‘mechanical act’, C as the compliance to the grammar). The full modelling/coding/testing loop bounces around the entirety of the frame, of course, in a fully-fractal way. Some of the ‘coding’ part, in your sense, loops back and forth across the C/A boundary, as you say, but particularly in terms of themes such as translating patterns etc (A) into syntax-compatible structure (C). We’ll also see frequent dives into N, either via the A/N boundary (‘edge of innovation’) to explore a new idea, or across the S/N boundary (‘edge of panic’) when the code doesn’t do what we expect!

Hope that makes a bit more sense this time? Thanks again, anyway.

Tom, thanks so much for the Bret Victor pointer. I’m going to be spreading that widely, as well. Here’s the thing, for me. The principle I’ve been driven by for many years has been the principle that organizations are life-forms composed of life-forms, and that much of what we do via technology ends up torturing both the organizational and biological life-forms involved. We have to stop that! We have to fight against the violations of this principle! Stop torturing enterprises and their people! That video finally helped me realize that I have been flailing around for a long time trying to blurt this out. So thanks!

Hi Doug – delighted to hear you say that the Bret Victor piece had as much impact on you as it did on me! 🙂

More on what it means to me some other time, perhaps – but again, am glad to see you found it useful. Thanks again!