Intimations of arrogance? – an addendum

Carrying on from the previous post, with a bit of an explanation about why I’m becoming so much of ‘a grumpy old guy’…

Here’s the blunt fact: I’m not a good thinker.

I know that.

Too many gaps in my knowledge, for a start.

Yet whilst I know I’m not a good thinker, it’s always a bit of a shock to discover that others can be even worse at it than I am. And all too often are.

I never know quite what best to do about that.

I know I’m not good at thinking – so I’m almost fanatical about finding ways to do it at least some bit better, any way that I can.

Often these ways can be surprisingly straightforward, such as simple tests or checklists.

Essential tactics that, to my horror, other people often seem to outright ignore. More than ignore, at times. More like actively suppress.

Ouch.

Not A Good Idea…

If it sounds arrogant of me to say that, so be it.

It happens, unfortunately, to be the truth.

Perhaps particularly so in fields such as enterprise-architecture and large-scale change, where clear thinking over the entire scope is an absolute must, and yet is often evident only by its outright absence.

Ouch indeed…

Let’s take as an example one of my perennial bugbears about so much of so-called ‘enterprise’ architecture.

Most people in the field still seem to regard ‘organisation’ and ‘enterprise’ as synonyms, which itself is actually yet another example of Not A Good Idea.

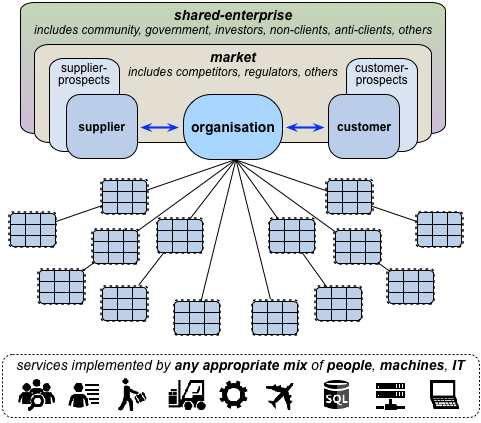

Instead, consider the idea that ‘the enterprise’ is something that ‘the organisation’ is in – a story-space of literal ‘enterprising’, one that encompasses not just the organisation, but its suppliers and customers, its competitors, its market, its regulators and much much more. Everyone each playing their own part within the same shared-story.

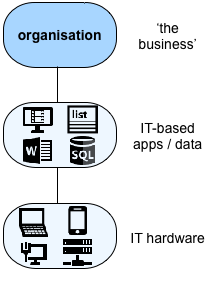

In which case, for any given organisation’s ‘the architecture of the enterprise’, the enterprise in scope is a complex web of people, needs, desires, hopes, fears, goals and more, linked together by relationships and services within and beyond that organisation itself – services that could, in theory at least, be implemented by any appropriate combination of people, machines and IT:

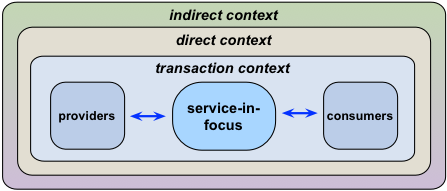

In effect, this is a pattern that we can re-use not just at the level of a single organisation, but at any level, ‘larger’ or ‘smaller’, for any scope, any scale, any content, any context. Pick on anything as the current ‘service-in-focus’, and apply the pattern to identify the scope of the respective ‘the enterprise’ that we need to address, relative to that service:

It’s not fixed, static, locked to a single type of description: it’s a pattern that we can use to help us make sense of just about anything, anywhere.

We can apply the same pattern to the organisation as ‘service-in-focus’, somewhat as earlier above:

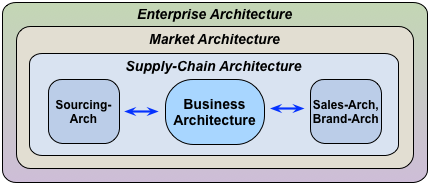

We can apply the same pattern ‘upward’, with a bit of expansion, to describe an overall market within which that organisation operates, and thus some of the relationships that connect the players in that space:

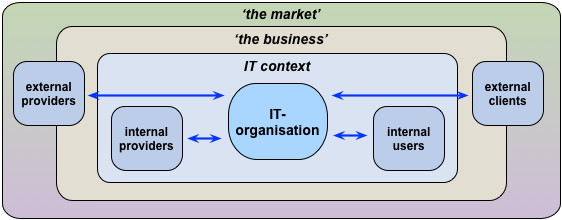

With some similar tweaks, we can apply the same pattern ‘downward’, to a subsidiary part of the organisation, such as the IT-unit:

And we can apply the same pattern further ‘downward’ again, such as to focus on the surrounding enterprise for an organisation’s physical IT-infrastructure:

It’s a pattern, right?

A meaningful, useful summary for the scope for a literal ‘the architecture of the enterprise’, whatever that respective ‘the enterprise’ might be?

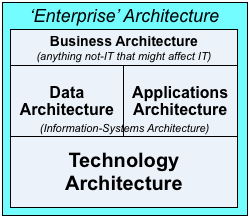

Well, for comparison, here’s what just about every darn framework for so-called ‘enterprise’-architecture would instead currently tell us is, always and only, the entirety of the scope for ‘the architecture of the enterprise’:

It might at first glance look much the same as that previous graphic. But in practice, to be blunt, it represents a disastrous scope-error – a colossal failure to think.

The reason why it’s an error is that it’s hardwired to a single scope by arbitrary assumptions about content and context – and yet people purport that it can be used as a pattern that can apply everywhere. Fact is, it can’t: it cannot and must not be used as a general-purpose pattern, because it’s hardwired to a single type of context with a single type of content. It just-about works if and only if we arbitrarily constrain the scope of both content and context to IT-only: everything not-IT is forcibly bundled into a random, largely-undifferentiated grab-box labelled ‘the business’, most of which it then proceeds to ignore. Anything that is not-IT, or not directly related to IT, becomes filtered-out of view, invisible. Not A Good Idea…

If we do try to use it in a pattern-like way, here’s what happens. We start off with what looks reasonable enough, if and only if the primary focus for our architecture is IT-hardware:

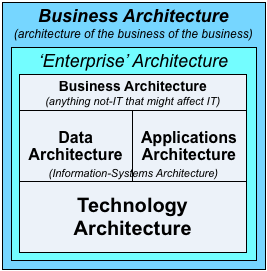

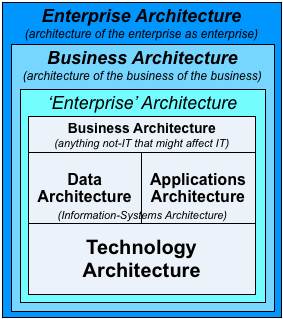

But if we try to stretch the same construct to look at ‘the business of the business’ – rather than ‘business’ as ‘anything not-IT that might affect IT’ – then the whole thing starts to break down, because its base is still rigidly anchored to that hard-wired view of IT-hardware, ‘Technology-Architecture’:

And if we’d want to extend the architecture still further to look at the context for the business itself, then this is what happens:

If you haven’t yet seen what’s wrong with that, look at it again: we have business-architecture both inside and outside of enterprise-architecture, which is itself both inside and outside business-architecture.

In other words, a chaotically-confusing muddle of mislabelling and more.

And remember, at each of these levels, it’s still focussed primarily on IT-hardware (‘Technology Architecture’). If something doesn’t ultimately interact in some way with that IT-hardware, it may as well not exist – as far as visibility is concerned, anyway. Which, as before, is Not A Good Idea…

Worse, this pseudo-pattern’s concept of layering embeds a truly horrible set of arbitrary conflations:

- ‘the business’ = anything-human + anything-relational + principle-based (‘vision/values’) decisions + higher-order abstraction + intent (plus anything ‘not-IT’)

- ‘IT apps and data’ = anything-computational + anything-informational + ‘truth-based’ (algorithmic) decisions + virtual (lower-order ‘logical’ abstraction; all IT-only)

- ‘hardware’ = anything-mechanical + anything-physical + physical-law (‘rule-based’) decisions + concrete (‘physical’/tangible abstraction; all IT-only)

Think about those conflations for a moment. For example, where in this categorisation-scheme would you put a physical machine? Short-answer: it must go in ‘hardware’ – because it’s physical – but also in ‘the business’ – because it’s not-IT – both at the same time. This is Not A Good Idea…

Where would you place a human-based process that does exactly the same as an IT-based process (such as in a substitute process in disaster-recovery and the like)? Short-answer: ‘the business’ – because it’s not-IT. So even though business-continuity requirements and suchlike will mandate that we must be able to view those processes as the same, this structure forces us to describe the same process in completely different ways, depending on how we’ve chosen to implement and operate it at the present time. This is Not A Good Idea…
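One way to see those two problems side by side is a small Python sketch. The `Item` fields and the membership rules in `layers_for` are assumptions made purely for illustration, loosely paraphrasing the three conflations listed above; no framework defines its layers in exactly this way, and the example items are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Item:
    """Hypothetical description of something we want to place in a layer."""
    name: str
    is_it: bool        # is it part of, or implemented in, IT?
    is_physical: bool  # is it a tangible, physical thing?


def layers_for(item: Item) -> set[str]:
    """Return every layer whose (assumed) membership rule the item satisfies."""
    layers = set()
    if not item.is_it:
        layers.add("the business")       # assumed rule: anything 'not-IT'
    if item.is_it and not item.is_physical:
        layers.add("IT apps and data")   # assumed rule: virtual, IT-only
    if item.is_physical:
        layers.add("hardware")           # assumed rule: anything physical
    return layers


# Hypothetical examples: a non-IT machine, and one process implemented two ways.
lathe = Item("factory lathe", is_it=False, is_physical=True)
manual_process = Item("manual order-entry (fallback)", is_it=False, is_physical=False)
it_process = Item("automated order-entry", is_it=True, is_physical=False)

print(sorted(layers_for(lathe)))
# ['hardware', 'the business']  (two layers at once)
print(layers_for(manual_process) == layers_for(it_process))
# False  (the same process lands in different layers)
```

Under these assumed rules the machine is claimed by two layers at the same time, and the two implementations of one process never share a layer, which is the mismatch that business-continuity work would trip over.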

In short, it’s a mess.

A mess that no-one should have allowed to continue on for more than the ten minutes or so that it’s taken you to read this. I trust that should be obvious to you by now, yes?

Now imagine what kind of an even worse mess we would create if we tried to build an architecture-notation on top of that shambles. For example, try using that notation to describe a data-centre: we could describe the servers, and the apps that run on them, but not why we’d run those apps. We could describe the routers, and even the cables, but not the power-supply or the cooling-system – or how those come into the building, from where, and why. We could describe the servers, and even the identifiers for the racks they’re seated in, their virtual locations, but not the racks themselves, or their physical locations – or the building that they’re in. In real-world practice, for a real whole-of-enterprise context, that kind of half-assed, half-complete architecture would be almost worse than no architecture at all.

No-one would get it that wrong, would they? But that’s exactly what they did.

Not A Good Idea…

Given that, now imagine that we try to apply the same shambles to create a purported reference-architecture for healthcare. We’d end up with a supposed ‘standard’ that could perhaps describe some aspects of healthcare-IT – but not healthcare itself. We could describe the sensors attached to a patient, and the apps that use the data from those sensors – but not the illness being monitored, or the criteria for the selection of those particular sensors. In fact we could barely even describe the patient to whom those sensors are attached – let alone the patient’s family, or other factors crucial to the patient’s recovery and health. We could describe data about medicines, but not the medicines themselves; we could describe the IT in an operating-theatre, but not the operating-theatre itself – nor the skills and non-IT equipment that the surgical and nursing staff would use within that operating-theatre. As an architecture-framework for that context, it’d be so incomplete as to be laughable – and potentially deadly, too, in an all too literal sense.

No-one would get it that wrong, would they? But that’s exactly what they did.

Not A Good Idea…

That one same massive mistake, applied over and over, again and again, in industry after industry, year after year. And the people involved then wonder why it doesn’t give them the results they expect or need.

Huh…?

And yeah, you’d perhaps understand now why I get a bit grumpy about that kind of cluelessness.

Particularly as I’m the one who’s then told he’s ‘wrong’, for the ‘sin’ of having pointed out just how clueless it is.

Bah…

(Describing others’ thinking as “cluelessness”? – that’s a bit arrogant, isn’t it?

Short-answer: yes. Probably. But I’ve run right out of patience and tolerance for that level of wilful incompetence.

Besides, it’s worse than mere cluelessness now. A lot worse. Oh well.)

So think for a while about the lack of thinking that’s necessary to make those kinds of scope-errors – errors so blatant that no-one should have missed them.

What was missed?

How was it missed?

What were the checks that were missed, to test against arbitrary assumptions that were invalid in that arena?

What were the checks that were not applied to the thinking itself?

What would you do to try to warn those people about those scope-errors, those thinking-errors?

And what response would you expect, to your attempts to prevent a disastrous mistake being made?

In my experience, don’t expect a ‘Thank you’ from such people. Quite the opposite, more like. Oh well.

Fact is that they’ll get it only when they get it – and not a moment before. No matter how much damage that cluelessness causes along the way.

But somehow – somehow – we have to find a way to reduce the damage that that cluelessness can cause. Preferably before the damage occurs…

Oh, and it’s not just in the IT domains that this occurs – it’s everywhere.

Every darn field that I’ve ever worked in has been riddled with the same kinds of cluelessness.

Why?

Why?

In which case, how do we do it right?

One good place to start would be some long, painful self-study of the Seven Sins of Dubious Discipline: the Hype Hubris; the Golden-Age Game; the Newage Nuisance; the Meaning Mistake; the Possession Problem; the Reality Risk; and Lost In The Learning Labyrinth. And also explore how these same sins show up in the everyday world that we’re ‘sold’ every day…

(Don’t worry: everyone falls into these mistakes from time to time. I certainly do. But it’s only if we know about these mistakes, and are honest with ourselves about how and when we fall into them, that we can start to do something about it…)

Another place might be where I started out on this, almost half a century ago, trying to make some sense of method:

Just about everyone seems to start from method, from a purported “This is The Way To Do It”.

Yet that isn’t the way that it works – other than in specific special-cases. (We’ll get back to what that ‘specific’ actually is in a moment.) Without some extra understanding that all-too-often is all-but-entirely absent, that ‘methods-first’ approach will fail. For example, it’s why so-called ‘best-practices’ so often turn out in practice to be best-practice only for the people who developed that ‘best-practice’ in the first place.

Why?

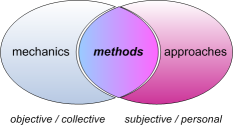

It’s because a viable method for any given context arises from a context-specific mix of the mechanics – the elements that are the same for everyone and/or everything, everywhere in that context – and the approaches – the elements that vary within that context.

Oh, and whenever real people are involved, we’ll probably need to include all of their distinct drivers too – both as individuals, and as groups of individuals:

So, the right method for any point in any context, at any moment in that context, sits at an intersection of that which is the same everywhere in that context, that which is specific to that part of that context, and the drivers that apply for the people in that part of the context.

The mechanics – ‘that which is the same everywhere’ – will be the same for every method in that context, by definition.

But the approaches – ‘that which is specific to that part of the context’ – and the drivers – people’s individual concerns – either may, will or must be different for each person, and/or across each part of the context.

And even the mechanics of the context can change if we shift to a different context, a different type of context. Such as we’ll often get, for example, with ‘best-practices’ and suchlike.

Which tells us that ‘method-first’ can only work when everything in the new context is exactly the same as in the specific part of the context from which that method was derived.

Which, in the real-world, is rarely the case.
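The reasoning above can be sketched in a few lines of Python. The `Context` class, its three fields, and the `method_transfers` and `mismatches` helpers are hypothetical names invented for this illustration, and the example labels are made up. Treating mechanics, approaches and drivers as plain sets of labels is a deliberate oversimplification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Context:
    """Hypothetical model of a context as three sets of labels."""
    mechanics: frozenset   # the same for everyone, everywhere in the context
    approaches: frozenset  # the elements that vary within the context
    drivers: frozenset     # concerns of the people involved


def method_transfers(source: Context, target: Context) -> bool:
    """A method derived in `source` is assumed to carry over to `target`
    only if all three elements match exactly."""
    return source == target


def mismatches(source: Context, target: Context) -> dict:
    """Name the elements that differ, with the labels not shared by both."""
    return {
        field: getattr(source, field) ^ getattr(target, field)
        for field in ("mechanics", "approaches", "drivers")
        if getattr(source, field) != getattr(target, field)
    }


# Made-up example contexts, for illustration only.
it_shop = Context(frozenset({"tcp/ip"}), frozenset({"agile"}), frozenset({"uptime"}))
hospital = Context(
    frozenset({"physiology"}), frozenset({"triage"}), frozenset({"patient recovery"})
)

print(method_transfers(it_shop, it_shop))     # True
print(method_transfers(it_shop, hospital))    # False
print(sorted(mismatches(it_shop, hospital)))  # ['approaches', 'drivers', 'mechanics']
```

The exact-equality test is intentionally strict: it mirrors the claim that a method carries over unchanged only when nothing relevant differs, while `mismatches` points at what would need rethinking before the method is reused.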

Which tells us that any ‘method-first’ approach – such as the notion that an ‘enterprise’-architecture framework centred solely around IT-hardware is applicable everywhere, for every type of context – is unlikely to be successful in any context other than that for which it was first developed. And the further we shift from that initial context, the less valid it necessarily becomes. In other words, Not A Good Idea…

The right way round – the only way that works – is to start from a context-neutral pattern; identify the mechanics, approaches and drivers for that context; and only then build a context-specific frame for content and action.

Yet for far too many people I meet – perhaps particularly the IT-obsessives who still infest the ‘enterprise’-architecture space – that bald fact above about inherent inapplicability still remains utterly incomprehensible. Instead, they still cling to the same disastrously-dysfunctional frame, and still try to force-fit every context to it, in classical Procrustean style – even though they know, from every scrap of evidence we now have, that doing so simply does not work.

Why?

Why?

I give up.

I’ll admit it: I simply cannot understand why so many people would so wilfully avoid and abandon almost any form of rigour, any form of discipline, simply to protect half-assed non-thinking, relentlessly misapplied in known-inappropriate contexts, that all but invariably leads to disastrous outcomes, and is, almost in every case, plainly Not A Good Idea.

All that I’m left with right now is the bleak words of singer / songwriter Roy Harper, rather too many years ago:

“You can lead a horse to water,

but you’re never going to make it drink;

you can lead a man to slaughter

but you’re never going to make him think…”

I know I’m not a good thinker.

I know that.

Which is why I try to improve on that every day.

Yet if Roy Harper is right, there’s nothing I can do about the fact that so many people will still wilfully choose to be even worse at thinking than I am. And wilfully choose to do nothing to improve on it, either.

I hope, almost beyond hope now, that you can do better than that…

And that you can do better than me, too. A lot better.

Because if you can’t and don’t, we’re dead.

Tom, do you have a short and simple answer to why it is “not a good idea” to consider an organisation (which is a fractal concept) as an enterprise?

Many thanks, Peter.

The shortest answer is that ‘organisation’ is ‘how’, whereas ‘enterprise’ is ‘why’ – and I think you’d agree that confusing ‘how’ for ‘why’ is not a good idea?

(More on that in the post ‘Organisation and enterprise as ‘how’ and ‘why’’, http://weblog.tetradian.com/2014/10/06/organisation-and-enterprise-as-how-and-why/ )

The somewhat longer answer is that, as I see it, they’re different fractal entities with different bounding-conditions: ‘organisation’ is bounded by rules, roles and responsibilities (i.e. ‘how’-oriented), whereas ‘enterprise’ is bounded by vision, values and commitments (i.e. ‘why’-oriented). The two sets of boundaries _can_ coincide, but it’s not useful to do that: ‘organisation=enterprise’ is an organisation that is almost literally talking solely to itself, with nothing beyond itself with which to either transact or interact. For most purposes, I’ve found that the most useful distinction is that for a given organisation in scope, the respective enterprise in scope should be three (fractal) steps larger: 0=same as organisation, 1=transaction-space (i.e. SIPOC etc), 2=direct-interaction space (‘the market’), 3=indirect-interaction space (e.g. families, country, anticlients etc – ‘shared-enterprise’).

(More on that in the post ‘Organisation and enterprise’, http://weblog.tetradian.com/2014/10/06/organisation-and-enterprise-as-how-and-why/ )

I hope that helps / makes-sense?

Thanks for the response and for the links.

I hold a different conceptualisation but I think our different conceptualisations successfully attend to all the factors you have nominated. My interest was in testing sufficiency of my own metamodel which I knew to be different to yours.